Mean imputation: So simple. And yet, so dangerous.

Perhaps that’s a bit dramatic, but mean imputation (also called mean substitution) really ought to be a last resort.

It’s a popular solution to missing data, despite its drawbacks. Mainly because it’s easy. It can be really painful to lose a large part of the sample you so carefully collected, only to have little power.

But that doesn’t make it a good solution, and it may not help you find relationships with strong parameter estimates. Even if they exist in the population.

On the other hand, there are many alternatives to mean imputation that provide much more accurate estimates and standard errors, so there really is no excuse to use it.

This post is the first explaining the many reasons not to use mean imputation (and to be fair, its advantages).

First, a definition: mean imputation is the replacement of a missing observation with the mean of the non-missing observations for that variable.
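As a minimal sketch of that definition in Python: the `mean_impute` helper and the income values below are made up for illustration, and missing values are assumed to be stored as `None`.

```python
from statistics import mean


def mean_impute(values):
    """Replace each None with the mean of the non-missing values."""
    observed = [v for v in values if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in values]


# Hypothetical annual incomes in thousands; None marks a missing observation.
income = [32.0, None, 41.0, 55.0, None, 28.0]
imputed = mean_impute(income)

print(imputed)        # [32.0, 39.0, 41.0, 55.0, 39.0, 28.0]
print(mean(imputed))  # 39.0, the same as the mean of the four observed values
```

Note that the mean of the filled-in variable equals the mean of the observed values. That is the one property mean imputation preserves.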

Problem #1: Mean imputation does not preserve the relationships among variables.

True, imputing the mean preserves the mean of the observed data. So if the data are missing completely at random, the estimate of the mean remains unbiased. That’s a good thing.

Plus, by imputing the mean, you are able to keep your sample size up to the full sample size. That’s good too.

This is the original logic involved in mean imputation.

If all you are doing is estimating means (which is rarely the point of research studies), and if the data are missing completely at random, mean imputation will not bias your parameter estimate.

It will still bias your standard error, but I will get to that under Problem #2 below.

Since most research studies are interested in the relationship among variables, mean imputation is not a good solution. The following graph illustrates this well:

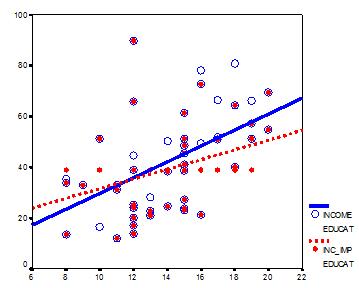

This graph illustrates hypothetical data for X = years of education and Y = annual income in thousands, with n = 50. The blue circles are the original data, and the solid blue line indicates the best fit regression line for the full data set. The correlation between X and Y is r = .53.

I then randomly deleted 12 observations of income (Y) and substituted the mean. The red dots are the mean-imputed data.

Blue circles with red dots inside them represent non-missing data. Empty blue circles represent the missing data. If you look across the graph at Y = 39, you will see a row of red dots without blue circles. These represent the imputed values.

The dotted red line is the new best fit regression line with the imputed data. As you can see, it is less steep than the original line. Adding in those red dots pulled it down.

The new correlation is r = .39. That’s a lot smaller than .53.

The real relationship is quite underestimated.
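You can reproduce this kind of shrinkage yourself. The sketch below is not the data behind the graph: it simulates a hypothetical education/income data set (the coefficients, noise level, and seed are arbitrary assumptions), deletes 12 incomes completely at random, fills them with the observed mean, and compares correlations and slopes.

```python
import random
from math import sqrt
from statistics import mean


def sums_of_squares(xs, ys):
    """Return (Sxx, Syy, Sxy): centered sums of squares and cross-products."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxx, syy, sxy


def pearson_r(xs, ys):
    sxx, syy, sxy = sums_of_squares(xs, ys)
    return sxy / sqrt(sxx * syy)


def ols_slope(xs, ys):
    sxx, _, sxy = sums_of_squares(xs, ys)
    return sxy / sxx


rng = random.Random(0)  # arbitrary seed, only so the run is repeatable
n, n_missing = 50, 12

# Simulated stand-ins for the two variables; all numbers here are made up.
education = [rng.uniform(8, 20) for _ in range(n)]
income = [5 + 2.5 * x + rng.gauss(0, 12) for x in education]

# Delete 12 incomes completely at random, then fill with the observed mean.
missing = set(rng.sample(range(n), n_missing))
x_obs = [x for i, x in enumerate(education) if i not in missing]
y_obs = [y for i, y in enumerate(income) if i not in missing]
y_fill = mean(y_obs)
income_imputed = [y_fill if i in missing else y for i, y in enumerate(income)]

r_full = pearson_r(education, income)
r_observed = pearson_r(x_obs, y_obs)
r_imputed = pearson_r(education, income_imputed)
slope_observed = ols_slope(x_obs, y_obs)
slope_imputed = ols_slope(education, income_imputed)

print(f"r, full data:      {r_full:.2f}")
print(f"r, observed cases: {r_observed:.2f}")
print(f"r, mean-imputed:   {r_imputed:.2f}")
print(f"slope, observed cases: {slope_observed:.2f}")
print(f"slope, mean-imputed:   {slope_imputed:.2f}")
```

The imputed points sit exactly at the mean of Y, so they add nothing to the covariance between X and Y while still adding spread in X. That is why the mean-imputed correlation and slope cannot be larger in magnitude than the ones computed from the observed cases alone.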

Of course, in a real data set, you wouldn’t notice so easily the bias you’re introducing. This is one of those situations where in trying to solve the lowered sample size, you create a bigger problem.

One note: if X were missing instead of Y, mean substitution would artificially inflate the correlation.

In other words, you’ll think there is a stronger relationship than there really is. That’s not good either. It’s not reproducible and you don’t want to be overstating real results.

This solution that is so good at preserving unbiased estimates for the mean isn’t so good for unbiased estimates of relationships.

Problem #2: Mean imputation leads to an underestimate of standard errors.

A second reason applies to any type of single imputation. Any statistic that uses the imputed data will have a standard error that’s too low.

In other words, yes, you get the same mean from mean-imputed data that you would have gotten without the imputations. And yes, there are circumstances where that mean is unbiased. Even so, the standard error of that mean will be too small.

Because the imputations are themselves estimates, there is some error associated with them. But your statistical software doesn’t know that. It treats them as real data.

Ultimately, because your standard errors are too low, so are your p-values. Now you’re making Type I errors without realizing it.

That’s not good.
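To see how much too low, here is a small sketch using the standard error of the mean. The data are simulated (the distribution and seed are arbitrary assumptions), with 12 of 50 values deleted at random and replaced by the observed mean.

```python
import random
from math import sqrt
from statistics import mean, stdev


def standard_error(values):
    """Standard error of the mean: sample SD divided by sqrt(n)."""
    return stdev(values) / sqrt(len(values))


rng = random.Random(1)  # arbitrary seed, only so the run is repeatable
n, n_missing = 50, 12

# Hypothetical incomes in thousands; 12 are deleted completely at random.
income = [rng.gauss(40, 15) for _ in range(n)]
missing = set(rng.sample(range(n), n_missing))
observed = [y for i, y in enumerate(income) if i not in missing]

# Mean imputation: every missing value becomes the observed mean.
imputed = observed + [mean(observed)] * n_missing

se_observed = standard_error(observed)
se_imputed = standard_error(imputed)

# The imputed values add no squared deviations, so only n changes:
# SE_imputed / SE_observed = sqrt(n_obs * (n_obs - 1) / (n * (n - 1)))
n_obs = len(observed)
expected_ratio = sqrt(n_obs * (n_obs - 1) / (n * (n - 1)))

print(f"SE from observed values: {se_observed:.3f}")
print(f"SE after mean imputation: {se_imputed:.3f}")
print(f"ratio: {se_imputed / se_observed:.3f} (formula: {expected_ratio:.3f})")
```

With 38 observed values out of 50, the ratio works out to about 0.76. The software reports a standard error roughly a quarter smaller than the one the 38 real observations support, even though no new information was added.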

A better approach? There are two: Multiple Imputation or Full Information Maximum Likelihood.

I understand your point, but as I see it your critique is not totally valid, since it is posed from a point of view of knowledge (about the missing values), which is simply not useful when imputing (the whole issue is that you do not know the missing values).

From the point of view of ignorance (not knowing the missing values), imputing mean values (the mean you DO have!) does not change the regression line (though it can still be “wrong”!). It only decreases the estimators’ standard error (the main issue with mean imputation).

Hi Diogo,

Deleting the data to create the missing data was simply done here to demonstrate the real issue. The fact that I deleted randomly is actually the best-case scenario. Usually data are NOT missing that randomly, so ignoring those missing data, or using an approach like mean imputation, creates even more bias.

I am carrying out research on mean imputation and random imputation to find out which one is more efficient.

Any examples of papers that have used mean imputation, out of curiosity? It is said that many researchers still use this method, so I’d be curious to see such examples. Thanks.

Hi

This may sound very basic, but how do we conduct a mean imputation in SPSS?

Mar

Hi Mar,

Many procedures have a checkbox, but I have to say, most of the time mean imputation causes more problems than it solves. There are many, many better approaches.

I feel that simply accounting for missing data is a good way to remove bias, or not even have to address it. In my master’s thesis, I found out that 4 states in the US didn’t offer any LGBT questions on their versions of the BRFSS CDC data, so I noted that in the footnotes of the table.

I am a firm believer in reporting on the current data as-is.

Hi Abby,

You’re absolutely right that often solutions for missing data cause more bias than they solve. That’s much of the point of this article.

But even reporting on the data as-is is choosing a missing data solution. You’re just choosing what’s called Complete Case Analysis or Listwise Deletion. This solution actually has stronger assumptions than some other solutions to avoid bias. The biggest assumption you’re making here is that the 4 states with no LGBT questions are a random sample of all states. That’s possible.

Regardless, you followed an important practice: reporting what you did to account for missing data. Transparency is key.

First you compute the mean EXCLUDING MVs (missing values), which SPSS handles very well; you can actually ask SPSS to exclude MVs. Let’s say your MV code is 99. You then replace 99 with the computed mean using an IF statement; alternatively, you can replace the MVs in your data set directly with the calculated mean. Hope this helps!

Hi,

It’s a good explanation. Can you tell me about the advantages and disadvantages of hot deck, cold deck, deductive, and cell mean imputation?

Hi Revathi,

There is some, though not detailed, information about these in our webinar Approaches to Missing Data: The Good, the Bad, and the Unthinkable. The recording is free.