our programs

Whether you need ongoing learning and support, want to dig deep into one statistical method, or need clarity on a specific issue, we’ve got your back. Choose what’s right for you!

what people are saying

I’m a scientist at a federal lab where we don’t have a statistical consulting group, so just knowing you were there was huge for me!

Just wanted to thank you for your help in the webinar last month. I had some questions about mean reversion – I seemed to understand it less the more I thought about it. Anyway, we talked it through in the webinar, and it greatly helped my understanding of the topic.

Yesterday I attended the webinar on GLMM, really nice presentation by Kim. As a statistician, I find it good to see how complicated stuff is presented by others, both in order to learn myself and to learn how to present statistics to non-statisticians.

Thank you for this answer! It has saved me from many more hours of fruitlessly searching for something I wasn’t even sure how to search for! 😛 I will look into those resources and may consider the bootstrapping method and training after reading more about it.

I am so glad the Analysis Factor exists. I honestly don’t know how I would get answers to my statistical questions without the program. And it’s always a pleasure to work with all of you.

What a straightforward solution! I had spent hours trying to fathom it out – thank you!

I feel that members can feel really relaxed. You don’t have the ‘oh this must be silly, I am sure I should know this’ type of feeling when posting questions.

The Analysis Factor has been a wonderful resource that I have greatly appreciated over the past few years, in terms of the wonderful expertise provided and also the positive supportive approach taken by all the trainers (unlike an old undergrad statistics professor I once had who took pleasure in intimidating students). Thanks so much!

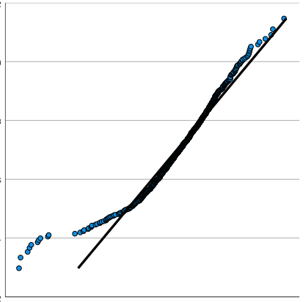

stat skill-building compass
