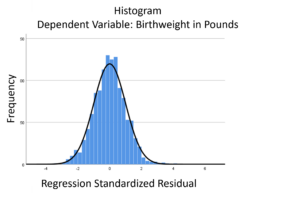

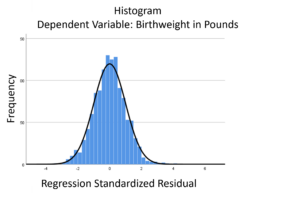

The linear model normality assumption, along with the constant variance assumption, is quite robust to departures. That means that even if the assumptions aren’t met perfectly, the resulting p-values and confidence intervals will still be reasonable estimates.

This is great because it gives you a bit of leeway to run linear models, which are intuitive and (relatively) straightforward. This is true for both linear regression and ANOVA.

You do need to check the assumptions anyway, though. You can’t just claim robustness and not check. Why? Because some departures are so far off that the p-values and confidence intervals become inaccurate. And in many cases there are remedial measures you can take to turn non-normal residuals into normal ones.

But sometimes you can’t.

Sometimes it’s because the dependent variable just isn’t appropriate for a linear model. The (more…)

When your dependent variable is not continuous, unbounded, and measured on an interval or ratio scale, linear models don’t fit. The data just will not meet the assumptions of linear models. But there’s good news: other models exist for many types of dependent variables.

Today I’m going to go into more detail about six common types of dependent variables that are discrete, bounded, or measured on a nominal or ordinal scale (some are all three), and the tests that work for them instead.

(more…)

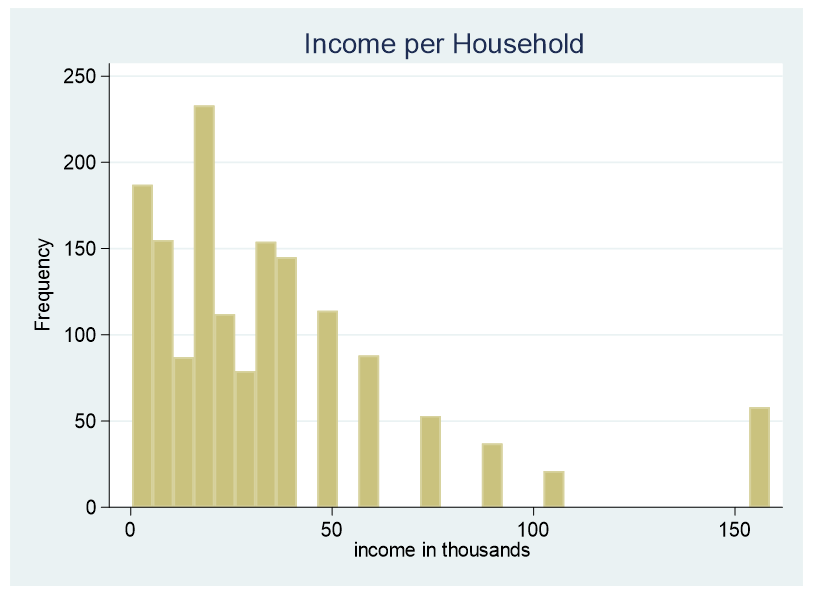

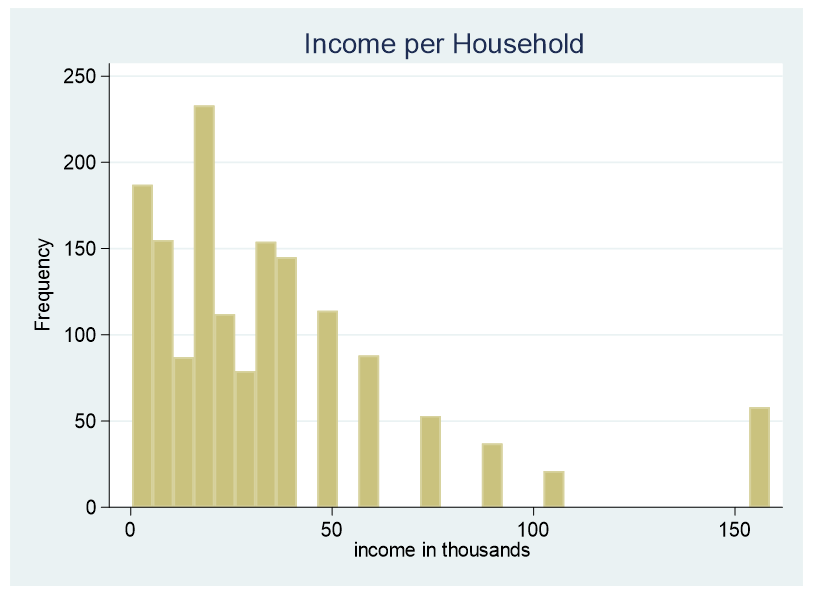

At times it is necessary to convert a continuous predictor into a categorical predictor. For example, income per household is shown below.

This data is censored: all family income above $155,000 is recorded as $155,000. A further explanation about censored and truncated data can be found here. It would be incorrect to use this variable as a continuous predictor because of the censoring.
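One way to make that conversion is to bin income into brackets, using the censoring point as the floor of an open-ended top bracket. The following is a minimal Python sketch; the cut points, labels, and the `income_bracket` helper are illustrative assumptions, not the categories used for the data in this post.

```python
from bisect import bisect_right

CENSOR_POINT = 155_000  # incomes above this are recorded as exactly 155,000

# Hypothetical bracket lower bounds. The last one is the censoring point,
# so every censored value lands in a single open-ended top category.
LOWER_BOUNDS = [0, 25_000, 50_000, 100_000, CENSOR_POINT]
LABELS = ["<25k", "25k-<50k", "50k-<100k", "100k-<155k", "155k+"]

def income_bracket(income):
    """Return the bracket label for a recorded (possibly censored) income."""
    if income < 0:
        raise ValueError("income must be non-negative")
    # bisect_right counts how many lower bounds are <= income
    return LABELS[bisect_right(LOWER_BOUNDS, income) - 1]

recorded = [18_000, 62_500, 154_999, 155_000]
brackets = [income_bracket(x) for x in recorded]
print(brackets)
```

Because the top bracket starts at the censoring point, the categorical predictor never pretends to know how far above $155,000 a family's income actually is.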

(more…)

by Jeff Meyer

As mentioned in a previous post, there is a significant difference between truncated and censored data.

Truncated data eliminates observations from an analysis based on a maximum and/or minimum value for a variable.

Censored data has limits on the maximum and/or minimum value for a variable but includes all observations in the analysis.

As a result, the models for analysis of these data are different. (more…)

A normally distributed variable can take values without limit in both directions on the number line. While most variables have practical limits, the assumption of infinite tails is usually quite reasonable because there is no real boundary.

Air temperature is an example of a variable that can extend far from its mean in either direction.

But for other variables, there is a practical beginning or ending point. Age is left-bounded. It starts at zero.

The number of wins that a baseball team can have in a season is bounded on the upper end by the number of games played in a season.

The temperature of water as a liquid is bounded on the low end at zero degrees Celsius and on the high end at 100 degrees Celsius.

There are two types of bounded data that have direct implications for how to work with them in analysis: censored and truncated data. Understanding the difference is a critical first step when working with these variables.

Understanding Censored and Truncated Data

Censored Data

Censored data have unknown values beyond a bound at one or both ends of the number line. Censoring can exist by design: when values beyond the boundary are observed but reported only at the boundary, the researcher has made the decision to restrict the range of the scale.

An example of a lower censoring boundary is the recording of pollutants in our water. The researcher may not care about (or instruments may not be able to detect) the level of pollutants if it falls below a certain threshold (e.g., .005 parts per million). In this case, any pollutant level below .005 ppm is reported as “<.005 ppm.”

An upper censor could be placed on temperature in a science experiment. Once the temperature goes above x degrees the scientist doesn’t care. So s/he measures it as “>x”.

Data can be censored on both ends as well. Income could be reported as “<$20,000” if the actual is below $20,000 and reported as “>$200,000” if above that level.
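As a rough illustration of two-sided censoring, the Python sketch below reports an income the way such a survey might. The function name `censor_income` and the output formatting are assumptions made for this example; only the $20,000 and $200,000 bounds come from the text.

```python
def censor_income(actual, lower=20_000, upper=200_000):
    """Report an income the way a two-sided censored survey would."""
    if actual < lower:
        return f"<${lower:,}"   # we only know it is below the lower bound
    if actual > upper:
        return f">${upper:,}"   # we only know it is above the upper bound
    return f"${actual:,}"       # inside the bounds, the exact value is kept

reported = [censor_income(x) for x in (12_000, 85_000, 450_000)]
print(reported)
```

Every respondent still appears in the data; what is lost is how far past the bound the extreme incomes fall.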

Censoring can also arise without being designed. Scores on classroom tests or college admission tests are examples: they are censored not by the researcher’s choice but by the actual bounds of the test. A student cannot score above 100% correct no matter how much better they know the topic than other students.

Truncated Data

Truncation occurs when values beyond a boundary are either excluded when gathered or excluded when analyzed. For example, if someone conducting a survey asks you if you make more than $100,000, and you answer “yes” and the surveyor says “thanks but no thanks”, then you’ve been truncated.

Or if the number of arrests is measured from police records, then everyone with 0 arrests will, by definition, be excluded from the sample.

Excluding cases from a data set at a preset boundary has the same effect. Creating models on middle income values would involve truncating income above and below specific amounts.

So to summarize, data are censored when we have partial information about the value of a variable—we know it is beyond some boundary, but not how far above or below it.

In contrast, data are truncated when the data set does not include observations in the analysis that are beyond a boundary value. Having a value beyond the boundary eliminates that individual from being in the analysis.

In truncation, it’s not just the variable of interest that we don’t have full data on. It’s all the data from that case.
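The contrast can be sketched in a few lines of Python. The respondent records and the $100,000 limit below are made up for illustration, and censored values are recorded at the limit itself, which is one common convention.

```python
# Hypothetical survey records; ids, ages, and incomes are invented.
respondents = [
    {"id": 1, "age": 29, "income": 35_000},
    {"id": 2, "age": 41, "income": 72_000},
    {"id": 3, "age": 37, "income": 98_000},
    {"id": 4, "age": 52, "income": 140_000},
    {"id": 5, "age": 60, "income": 310_000},
]
LIMIT = 100_000

# Censoring: every case stays in the data set, but incomes above the
# limit are recorded at the limit. Age and the other fields are intact.
censored = [{**r, "income": min(r["income"], LIMIT)} for r in respondents]

# Truncation: cases above the limit are dropped entirely, so their
# age and every other field disappear along with the income value.
truncated = [r for r in respondents if r["income"] <= LIMIT]

print(len(censored), [r["income"] for r in censored])
print(len(truncated), [r["id"] for r in truncated])
```

The censored data set still has five rows with partial income information for two of them, while the truncated one has only three rows and no trace of the other two respondents.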

Jeff Meyer is a statistical consultant with The Analysis Factor, a stats mentor for Statistically Speaking membership, and a workshop instructor. Read more about Jeff here.


Statistically speaking, when we see a continuous outcome variable we often worry about outliers and how these extreme observations can impact our model.

But have you ever had an outcome variable with no outliers because there was a boundary value at which accurate measurements couldn’t be or weren’t recorded?

Examples include:

- Income data where all values above $100,000 are recorded as $100k or greater

- Soil toxicity ratings where the device cannot measure values below 1 ppm

- Number of arrests where there are no zeros because the data set came from police records where all participants had at least one arrest

These are all examples of data that are truncated or censored. Failing to account for the truncation or censoring will produce biased results.
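A small simulation can make the bias concrete. The Python sketch below fits an ordinary least-squares line to simulated data three ways: on the full outcome, on an outcome censored at an upper limit, and on a sample truncated at that limit. The true slope, noise level, limit, and seed are all arbitrary assumptions, and the sketch only shows the naive fits; it does not implement any of the models designed for censored or truncated outcomes.

```python
import random

def ols_slope(xs, ys):
    """Slope of a simple least-squares line of ys on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

rng = random.Random(42)          # fixed seed so the run is repeatable
TRUE_SLOPE, LIMIT = 3.0, 20.0    # assumed values for the simulation

xs = [rng.uniform(0, 10) for _ in range(5000)]
ys = [2.0 + TRUE_SLOPE * x + rng.gauss(0, 5) for x in xs]

# Censored outcome: all cases kept, values above LIMIT recorded as LIMIT
ys_censored = [min(y, LIMIT) for y in ys]

# Truncated sample: cases with an outcome above LIMIT are dropped entirely
kept = [(x, y) for x, y in zip(xs, ys) if y <= LIMIT]
xs_trunc, ys_trunc = zip(*kept)

slope_full = ols_slope(xs, ys)
slope_censored = ols_slope(xs, ys_censored)
slope_truncated = ols_slope(xs_trunc, ys_trunc)

print(f"full: {slope_full:.2f}")
print(f"censored, naive fit: {slope_censored:.2f}")
print(f"truncated, naive fit: {slope_truncated:.2f}")
```

In this setup both naive fits understate the slope, because the largest outcomes, which occur mostly at high values of the predictor, are either flattened to the limit or missing altogether.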

This webinar will discuss what truncated and censored data are and how to identify them.

There are several different models that are used with this type of data. We will go over each model and discuss which type of data is appropriate for each model.

We will then compare the results of models that account for truncated or censored data to those that do not. From this you will see what possible impact the wrong model choice has on the results.

Note: This training is an exclusive benefit to members of the Statistically Speaking Membership Program and part of the Stat’s Amore Trainings Series. Each Stat’s Amore Training is approximately 90 minutes long.

(more…)
