If you’ve ever done any sort of repeated measures analysis or mixed models, you’ve probably heard of the unstructured covariance matrix. They can be extremely useful, but they can also blow up a model if not used appropriately. In this article I will investigate some situations when they work well and some when they don’t work at all.

The Unstructured Covariance Matrix

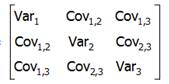

The easiest to understand, but most complex to estimate, type of covariance matrix is called an unstructured matrix. Unstructured means you’re not imposing any constraints on the values. For example, if we had a good theoretical justification that all variances were equal, we could impose that constraint and have to only estimate one variance value for every variance in the table.

The easiest to understand, but most complex to estimate, type of covariance matrix is called an unstructured matrix. Unstructured means you’re not imposing any constraints on the values. For example, if we had a good theoretical justification that all variances were equal, we could impose that constraint and have to only estimate one variance value for every variance in the table.

But in an unstructured covariance matrix there are no constraints. Each variance and each covariance is estimated uniquely from the data. As you can imagine, this results in the best possible model fit, because each variance and covariance values is very close to what the data reflect.

But that comes at a cost that may not be worth the improved fit. Estimating each one of those values can use up many degrees of freedom.

When Unstructured Covariances Don’t Always Work

A common use for a covariance matrix is for the residuals in models that measure repeated measures or longitudinal data. In a marginal model, the Sigma matrix measures the variances and covariances of each subject’s multiple, non-independent residuals.

So for example, consider a repeated measures study where the same subject performs the same task under different experimental conditions. The Sigma matrix contains the residual variance of each condition on the diagonal. Off diagonal, Sigma measures how related those measurements are to each other due to being from the same individual, after taking out the effect of the different experimental conditions.

Many times there are patterns in these variances and covariances. For example, all the variances may be nearly equal, and the covariances may be nearly equal. If that’s true, using a compound symmetry structure, which imposes those constraints, would save you a lot of degrees of freedom with little loss of fit, because you’d only have to estimate one variance and one covariance.

But if there really is no reliable pattern, an unstructured matrix will give you much better fit. I have successfully used unstructured Sigma matrices when repeated measurements were unequally spaced and variances differed a lot, but in no discernible pattern.

Unstructured Sigma matrices don’t work well, however, when there are many repeats and the sample size is not large. If there were 6 observations per subject, Sigma would be a 6×6 matrix. That requires estimating 6 variances and 18 covariances.

That may sound like an unusually large number of repeats, but it happens commonly in 2×3 within-subjects experiments. In a small sample, say 20 participants, you wouldn’t be able to fit an unstructured covariance matrix because you’d need more degrees of freedom than you have in the data.

In the MANOVA approach to repeated measures, an unstructured Sigma matrix is the only option. This is what is used in the multivariate tables of SPSS’s GLM Repeated Measures. The univariate tables use a compound symmetry structure, which is why sometimes you get univariate results, but the multivariate tables are all empty.

This is one advantage to running a marginal model in Mixed procedures instead of GLM–it allows you to choose the structure of the Sigma matrix, and find one that better balances the trade off between model fit and degrees of freedom.

When They Work Well

A different approach to fitting repeated measures as well as other clustered data is to fit a mixed model, a.k.a. a random effects model. These models often use unstructured covariance matrices for the random effects.

In these models, one or more random effects are included in the model. Like a residual, these random effects are each a measure of unexplained variance.

For example, in a basic random slope model for longitudinal data, in addition to the residual variance for responses from the same individual, there are two random effects in the model. These are a random intercept, for which we measure the variance in height of individuals’ trajectories over time, and a random slope, for which we measure the variance in trajectory slopes over time.

It’s useful to summarize the variances of these two effects, and the covariance between them, in a covariance matrix called the G matrix.

Unstructured covariance matrices work very well for G for a few reasons. First, G matrices are generally small, so there aren’t a lot of parameters to estimate. I can’t recall a G matrix that was larger than 3×3, though I suppose it’s theoretically possible. Second, unlike the residuals in a Sigma matrix, the effects in a G matrix are measuring completely different constructs. There is no reason to expect variances to be equal or covariances to display a pattern.

Therefore, unstructured G matrices have the benefit of improved model fit, and little chance of losing too many degrees of freedom. The only other common structure for a G matrix is a variance components structure, which fits different variance estimates, but 0 covariances.

Dear Madam Karen,

Thanks a lot for your commitment and consistent support by sharing your ample experience

Nice post, Karen. FYI, the matrix you called the Sigma matrix is often referred to as the R-matrix. See Lesa Hoffman’s (2015) book, for example, as well as the Stata documentation for -mixed-.

https://www.pilesofvariance.com/

Cheers,

Bruce

Hi Bruce,

Yes, it’s often called that. I’ve seen so many different names for just about everything in mixed models (even the models themselves have a bunch of different names). I’ve seen the G matrix called D also.

so if unstructured does blow up

what is best alternative [one of my 18 test data sets]

in particular what is structure for a. topelitz and b. varaince omponent?

really appreciate help my web searches are not helping

Thank you, Karen! This was so easy to understand! I really appreciate it. I am running analyses with twins nested in families (multilevel), with random intercepts. Using an unstructured covariance matrix would work best, because there may be no pattern in the covariance between twin siblings, correct? I hope I’m interepreting this correctly.

Hi Karen,

Firstly thank you for the clear message. When assessing applicability of correlation matrices, do you prefer to choose based on rationale or goodness of fit? (see Akaike information criterion in GEE by Wei Pan)

Grtz

Katrien