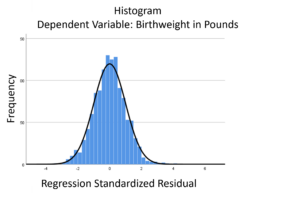

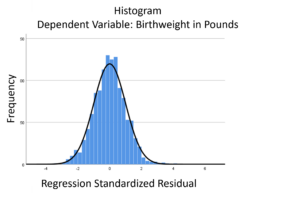

The normality assumption of linear models, along with the constant variance assumption, is quite robust to departures. That means that even if the assumptions aren’t met perfectly, the resulting p-values and confidence intervals will still be reasonable estimates.

This is great because it gives you a bit of leeway to run linear models, which are intuitive and (relatively) straightforward. This is true for both linear regression and ANOVA.

You do need to check the assumptions anyway, though. You can’t just claim robustness and not check. Why? Because some departures are so far off that the p-values and confidence intervals become inaccurate. And in many cases there are remedial measures you can take to turn non-normal residuals into normal ones.

But sometimes you can’t.

Sometimes it’s because the dependent variable just isn’t appropriate for a linear model. The (more…)

When your dependent variable is not continuous, unbounded, and measured on an interval or ratio scale, linear models don’t fit. The data just will not meet the assumptions of linear models. But there’s good news: other models exist for many types of dependent variables.

Today I’m going to go into more detail about 6 common types of dependent variables that are either discrete, bounded, or measured on a nominal or ordinal scale and the tests that work for them instead. Some are all of these.

by Jeff Meyer

As mentioned in a previous post, there is a significant difference between truncated and censored data.

Truncated data eliminates observations from an analysis based on a maximum and/or minimum value for a variable.

Censored data has limits on the maximum and/or minimum value for a variable but includes all observations in the analysis.

As a result, the models for analysis of these data are different. (more…)

Imagine this scenario:

This year’s flu strain is very vigorous. The number of people checking in at hospitals is rapidly increasing. Hospitals are desperate to know if they have enough beds to handle those who need their help.

You have been asked to analyze a previous year’s hospitalization length of stay by people with the flu who had been admitted to the hospital. The predictors in your data set are age group, gender and race of those admitted. You also have an indicator that signifies whether the hospital was privately or publicly run.

It’s that time of year: flu season.

Let’s imagine you have been asked to identify the factors that will help a hospital determine the length of stay in the intensive care unit (ICU) once a patient is admitted.

The hospital tells you that once the patient is admitted to the ICU, he or she has a day count of one. As soon as they spend 24 hours plus 1 minute, they have stayed an additional day.

Clearly this is count data. There are no fractions, only whole numbers.

To help us explore this analysis, let’s look at real data from the State of Illinois. We know the patients’ ages, gender, race and type of hospital (state vs. private).

A partial frequency distribution looks like this: (more…)

A normally distributed variable can have values without limits in both directions on the number line. While most variables have practical limitations, most of the time, this assumption of infinite tails is quite reasonable as there is no real boundary.

Air temperature is an example of a variable that can extend far from its mean in either direction.

But for other variables, there is a practical beginning or ending point. Age is left-bounded. It starts at zero.

The number of wins that a baseball team can have in a season is bounded on the upper end by the number of games played in a season.

The temperature of water as a liquid is bounded on the low end at zero degrees Celsius and on the high end at 100 degrees Celsius.

There are two types of bounded data that have direct implications for how to work with them in analysis: censored and truncated data. Understanding the difference is a critical first step when working with these variables.

Understanding Censored and Truncated Data

Censored Data

Censored data have unknown values beyond a bound on either end of the number line, or on both. Censoring can exist by design: when the data are observed and reported only at the boundary, the researcher has made the decision to restrict the range of the scale.

An example of a lower censoring boundary is the recording of pollutants in our water. The researcher may not care about (or instruments may not be able to detect) the level of pollutants if it falls below a certain threshold (e.g., .005 parts per million). In this case, any pollutant level below .005 ppm is reported as “<.005 ppm.”

An upper censor could be placed on temperature in a science experiment. Once the temperature goes above x degrees, the scientist doesn’t care how high it goes, so s/he records it as “>x”.

Data can be censored on both ends as well. Income could be reported as “<$20,000” if the actual value is below $20,000 and as “>$200,000” if it is above $200,000.

Censored data can also arise without being designed that way. Scores on tests or college admission exams are examples: they are censored not by design, but by the actual bounds of the scale. A student cannot score above 100% correct, no matter how much better they know the topic than other students.
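To make the two-sided income example concrete, here is a minimal Python sketch. The simulated incomes and the `censor` helper are illustrative assumptions, not part of the original analysis; only the $20,000 and $200,000 bounds come from the example above. The point it shows is that every observation stays in the data, but values beyond a bound are recorded at the bound along with a flag saying which side was censored.

```python
import random

LOWER, UPPER = 20_000, 200_000  # reporting bounds from the income example


def censor(value, lower=LOWER, upper=UPPER):
    """Return (recorded_value, status) for one observation.

    status is "left" if the true value is below the lower bound,
    "right" if it is above the upper bound, and "observed" otherwise.
    """
    if value < lower:
        return lower, "left"
    if value > upper:
        return upper, "right"
    return value, "observed"


random.seed(0)
# Hypothetical incomes from a log-normal distribution (illustration only).
true_incomes = [random.lognormvariate(11, 0.9) for _ in range(1000)]

# Censoring keeps one record per person; only the value is coarsened.
recorded = [censor(x) for x in true_incomes]

counts = {
    status: sum(1 for _, s in recorded if s == status)
    for status in ("left", "observed", "right")
}
print(len(recorded), counts)
```

Keeping the status flag next to the recorded value matters: without it, a value recorded at exactly $20,000 cannot be told apart from one that was censored there.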

Truncated Data

Truncation occurs when values beyond a boundary are either excluded when gathered or excluded when analyzed. For example, if someone conducting a survey asks you if you make more than $100,000, and you answer “yes” and the surveyor says “thanks but no thanks”, then you’ve been truncated.

Or if the number of arrests is measured from police records, then everyone with 0 arrests will, by definition, be excluded from the sample.

Excluding cases from a data set at a preset boundary has the same effect. Creating models on middle income values would involve truncating income above and below specific amounts.

So to summarize, data are censored when we have partial information about the value of a variable—we know it is beyond some boundary, but not how far above or below it.

In contrast, data are truncated when the data set does not include observations in the analysis that are beyond a boundary value. Having a value beyond the boundary eliminates that individual from being in the analysis.

In truncation, it’s not just the variable of interest that we don’t have full data on. It’s all the data from that case.
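The contrast can be sketched in a few lines of Python. Everything here is a hypothetical illustration: the simulated data, the `censor_above` and `truncate_above` helpers, and the choice of $100,000 as the bound (borrowed from the survey example). Censoring keeps every case and coarsens one variable; truncation drops the whole case, including its other variables.

```python
import random


def censor_above(rows, key, bound):
    """Keep every row; replace values above the bound with the bound and flag them."""
    out = []
    for row in rows:
        is_censored = row[key] > bound
        new_row = dict(row)
        new_row[key] = bound if is_censored else row[key]
        new_row["censored"] = is_censored
        out.append(new_row)
    return out


def truncate_above(rows, key, bound):
    """Drop every row whose value is above the bound, along with its other variables."""
    return [dict(row) for row in rows if row[key] <= bound]


random.seed(1)
# Hypothetical survey respondents (illustration only).
rows = [
    {"income": random.lognormvariate(11, 0.9), "age": random.randint(18, 80)}
    for _ in range(1000)
]

BOUND = 100_000
censored = censor_above(rows, "income", BOUND)
truncated = truncate_above(rows, "income", BOUND)

# Censoring preserves the sample size; truncation shrinks it.
print(len(rows), len(censored), len(truncated))
```

In the censored version, the ages of high earners are still available for analysis. In the truncated version, those respondents are gone entirely.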

Jeff Meyer is a statistical consultant with The Analysis Factor, a stats mentor for Statistically Speaking membership, and a workshop instructor. Read more about Jeff here.
