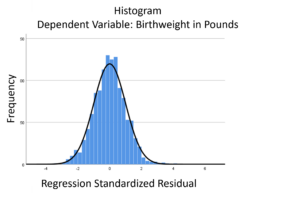

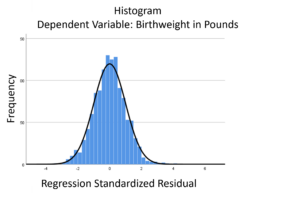

The linear model normality assumption, along with the constant variance assumption, is quite robust to departures. That means that even if the assumptions aren’t met perfectly, the resulting p-values and confidence intervals will still be reasonable estimates.

This is great because it gives you a bit of leeway to run linear models, which are intuitive and (relatively) straightforward. This is true for both linear regression and ANOVA.

You do need to check the assumptions anyway, though. You can’t just claim robustness and not check. Why? Because some departures are so far off that the p-values and confidence intervals become inaccurate. And in many cases there are remedial measures you can take to turn non-normal residuals into normal ones.
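One informal way to check residual normality is to correlate the sorted residuals with the normal quantiles you would expect for a sample of that size, which is the number behind a normal Q-Q plot. The sketch below is only an illustration under stated assumptions: the function names `pearson` and `qq_correlation` are hypothetical, the plotting positions `(i + 0.5) / n` are one common convention among several, and the two residual sets are constructed for the demo rather than taken from real data.

```python
from math import exp, sqrt
from statistics import NormalDist, mean


def pearson(xs, ys):
    """Plain Pearson correlation between two equal-length lists."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def qq_correlation(residuals):
    """Correlate sorted residuals with expected standard normal quantiles.

    Values near 1 suggest the residuals are roughly normal;
    clearly lower values suggest skew or heavy tails.
    """
    n = len(residuals)
    ordered = sorted(residuals)
    # Assumed plotting positions: (i + 0.5) / n for i = 0 .. n-1
    theoretical = [NormalDist().inv_cdf((i + 0.5) / n) for i in range(n)]
    return pearson(ordered, theoretical)


# Constructed demo residuals (not real data):
n = 100
bell_shaped = [NormalDist().inv_cdf((i + 0.5) / n) for i in range(n)]
right_skewed = [exp(z) for z in bell_shaped]  # strongly right-skewed

r_bell = qq_correlation(bell_shaped)
r_skewed = qq_correlation(right_skewed)
print(f"bell-shaped residuals: r = {r_bell:.3f}")
print(f"right-skewed residuals: r = {r_skewed:.3f}")
```

A correlation like this is a quick screen, not a replacement for looking at the Q-Q plot itself, since the plot shows where the departure happens.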

But sometimes you can’t.

Sometimes it’s because the dependent variable just isn’t appropriate for a linear model. The (more…)

When your dependent variable is not continuous, unbounded, and measured on an interval or ratio scale, linear models don’t fit. The data just will not meet the assumptions of linear models. But there’s good news: other models exist for many types of dependent variables.

Today I’m going to go into more detail about 6 common types of dependent variables that are discrete, bounded, or measured on a nominal or ordinal scale (some are all three), and the tests that work for them instead.

(more…)

Learning statistics is difficult enough; throw in some especially confusing terminology and it can feel impossible! There are many ways that statistical language can be confusing.

Some terms mean one thing in the English language, but have another (usually more specific) meaning in statistics. (more…)

by Christos Giannoulis

Many data sets contain well over a thousand variables. Such complexity, the speed of contemporary desktop computers, and the ease of use of statistical analysis packages can encourage ill-directed analysis.

It is easy to generate a vast array of poor ‘results’ by throwing everything into your software and waiting to see what turns up. (more…)

One important yet difficult skill in statistics is choosing the right type of model for different data situations. One key consideration is the dependent variable.

For linear models, the dependent variable doesn’t have to be normally distributed, but it does have to be continuous, unbounded, and measured on an interval or ratio scale.

Percentages don’t fit these criteria. Yes, they’re continuous and ratio scale. The issue is the (more…)

When you put a continuous predictor into a linear regression model, you assume it has a constant relationship with the dependent variable along the predictor’s range. But how can you be certain? What is the best way to measure this?

And most important, what should you do if it clearly isn’t the case?

Let’s explore a few options for capturing a non-linear relationship between X and Y within a linear regression (yes, really). (more…)
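As one example of what "non-linear within a linear regression" can mean, you can add a squared term for X. The model is still linear in its coefficients, so ordinary least squares applies. The sketch below is a minimal illustration, not necessarily one of the options the full post covers: the helper names `solve_linear_system` and `fit_quadratic` are hypothetical, and the data are made up to follow an exact curve.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Build an augmented matrix so the inputs are not modified
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def fit_quadratic(xs, ys):
    """Least-squares fit of y = b0 + b1*x + b2*x^2 via the normal equations."""
    design = [[1.0, x, x * x] for x in xs]
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(3)]
           for i in range(3)]
    xty = [sum(row[i] * y for row, y in zip(design, ys)) for i in range(3)]
    return solve_linear_system(xtx, xty)


# Made-up data that follow y = 1 + 2x + 3x^2 exactly (no noise)
xs = [float(i) for i in range(-5, 6)]
ys = [1.0 + 2.0 * x + 3.0 * x * x for x in xs]

b0, b1, b2 = fit_quadratic(xs, ys)
print(f"intercept={b0:.3f}, linear={b1:.3f}, quadratic={b2:.3f}")
```

In practice you would let your statistical software do the fitting; the point is only that the squared term enters the model as just another predictor.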
