Principal Component Analysis is really, really useful.

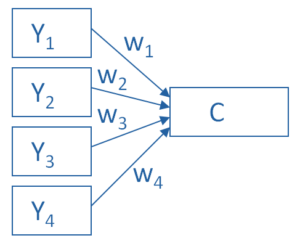

You use it to create a single index variable from a set of correlated variables.

In fact, the very first step in Principal Component Analysis is to create a correlation matrix (a.k.a., a table of bivariate correlations). The rest of the analysis is based on this correlation matrix.

You don’t usually see this step — it happens behind the scenes in your software.
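To make that behind-the-scenes step visible, here is a small base-R sketch. It uses simulated data with hypothetical item names and shows that a PCA is an eigendecomposition of the correlation matrix. The eigenvalues are the component variances, and they match what prcomp() reports on standardized raw data.

```r
# Sketch: PCA = eigendecomposition of the correlation matrix.
# Simulated data; item names are hypothetical.
set.seed(1)
n <- 500
latent <- rnorm(n)
X <- data.frame(
  item1 = latent + rnorm(n, sd = 0.6),
  item2 = latent + rnorm(n, sd = 0.8),
  item3 = latent + rnorm(n, sd = 1.0)
)

R <- cor(X)          # step 1: the (Pearson) correlation matrix
eig <- eigen(R)      # everything after this is based on R alone

eig$values                           # variance of each component
eig$values / sum(eig$values)         # proportion of variance explained
pc_loadings <- eig$vectors %*% diag(sqrt(eig$values))
round(pc_loadings, 3)

# Same component variances as prcomp() on standardized raw data
all.equal(eig$values, prcomp(X, scale. = TRUE)$sdev^2)
```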

Most PCA procedures calculate that first step using only one type of correlation: Pearson.

And that can be a problem. Pearson correlations assume the variables are truly quantitative, and they work best when the variables are also roughly normally distributed, meaning symmetric and bell shaped.

And unfortunately, many of the variables that we need PCA for aren’t.

Likert Scale Items are Tricky

Likert Scale items are a big one. If you’re not familiar with the name, they’re those scales from 1-5 or 1-9 that have values like “1=Strongly Disagree” and “5=Strongly Agree.”

There has been a lot of debate about treating Likert Scale data as if it were normally distributed. Much of this debate centers on two things:

1. The fact that although Likert items are technically ordered categories, the categories don’t really have qualitative differences.

They’re discrete measurements about an underlying continuum. How strongly you agree with a statement isn’t really categorical — it’s a continuum. We just can’t measure it continuously, so we use discrete categories that map onto that continuum. But it’s the continuum we’re really interested in.

One side of the debate says that the discrete ordinal measurements map onto the continuum closely enough to treat them as continuous, and the other says they don't.

The close-enough group is especially adamant because…

2. There often isn’t a good alternative that treats the Likert Scale item as ordinal discrete values yet still tests what we want.

Again, one side says that there isn’t an alternative method that does what we need. Therefore, it’s better to use the statistical method that does what we need it to do, even if the data don’t quite fit. It’s close enough.

The other says if the data don’t fit, then it’s not an option.
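A quick simulation makes the "discrete measurements of a continuum" idea concrete. This base-R sketch assumes two latent continua correlated at 0.6, chops each into five categories at made-up cut points, and compares Pearson correlations before and after. The coarse 1-5 codes typically show a weaker correlation than the continua they measure, and that gap is what the debate is about.

```r
# Sketch: two Likert items as coarse measurements of correlated continua.
set.seed(42)
n <- 5000
rho <- 0.6
z1 <- rnorm(n)
z2 <- rho * z1 + sqrt(1 - rho^2) * rnorm(n)   # latent correlation is about rho

# Hypothetical cut points mapping each continuum onto a 1-5 scale
cuts <- c(-Inf, -0.5, 0.3, 0.9, 1.5, Inf)
likert1 <- cut(z1, breaks = cuts, labels = FALSE)
likert2 <- cut(z2, breaks = cuts, labels = FALSE)

table(likert1)              # the 1-5 responses we actually observe
cor(z1, z2)                 # correlation between the continua
cor(likert1, likert2)       # Pearson on the 1-5 codes: typically smaller
```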

This is one of those lucky situations where we don’t have to choose or debate.

There is an alternative in PCA that uses Likert data as it is, yet still gives us information about the underlying continuum. (Sound too good to be true? Read on…)

Polychoric Correlations for Ordinal Variables

That alternative is to base the PCA on a different type of correlation: polychoric.

Polychoric correlations assume the variables are ordered measurements of an underlying continuum. (Sounds perfect for Likert items, huh?)

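The observed variables don't need to be truly continuous, and they don't need to be normally distributed. (The model does assume the underlying continuum is normal, but that's an assumption about the unobserved continuum, not about the 1-5 scores you actually recorded.)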

Polychoric correlations are based on maximum likelihood, so they’re not something you could calculate by hand. (I’ve never even seen a formula for them.)
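The likelihood the software maximizes is less mysterious than it sounds. Here is a sketch of the common two-step formulation (packages differ in details, such as whether the thresholds and ρ are estimated jointly). Each observed item is a latent variable cut at thresholds. The latent pair $(\xi, \eta)$ is standard bivariate normal with correlation $\rho$, $a_0 = b_0 = -\infty$, $a_I = b_J = +\infty$, $p_{k\cdot}$ and $p_{\cdot l}$ are the marginal proportions, $n_{ij}$ are the cell counts, and $\Phi_2$ is the bivariate normal CDF:

$$
\begin{aligned}
X = i &\iff a_{i-1} < \xi \le a_i, \qquad Y = j \iff b_{j-1} < \eta \le b_j,\\
a_i &= \Phi^{-1}\Big(\textstyle\sum_{k \le i} p_{k\cdot}\Big), \qquad b_j = \Phi^{-1}\Big(\textstyle\sum_{l \le j} p_{\cdot l}\Big),\\
\pi_{ij}(\rho) &= \Phi_2(a_i, b_j; \rho) - \Phi_2(a_{i-1}, b_j; \rho) - \Phi_2(a_i, b_{j-1}; \rho) + \Phi_2(a_{i-1}, b_{j-1}; \rho),\\
\ell(\rho) &= \sum_{i=1}^{I} \sum_{j=1}^{J} n_{ij} \log \pi_{ij}(\rho), \qquad \hat\rho_{\text{poly}} = \arg\max_{\rho \in (-1,1)} \ell(\rho).
\end{aligned}
$$

The polychoric correlation is the $\rho$ that makes the observed table most likely. There is no closed form, which is why it has to be found numerically.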

They are interpreted the same way as Pearson correlations. They range from -1 to 1, inclusive, and measure the strength and direction of the association between two variables.
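For intuition about what "based on maximum likelihood" means, here is a small base-R sketch of the common two-step estimate. It assumes the latent variables are standard bivariate normal and sets the thresholds from the cumulative marginal proportions. It then searches for the ρ in (-1, 1) that maximizes the likelihood of the observed contingency table. The function names pbvn() and polychoric_2step() are hypothetical. For real work, use a tested implementation such as psych::polychoric(), which handles edge cases more carefully.

```r
# Teaching sketch of a two-step polychoric correlation in base R.
# Assumes the latent variables are standard bivariate normal.

# P(Z1 <= a, Z2 <= b) for a standard bivariate normal with correlation rho,
# computed by integrating over Z1 (infinite thresholds handled explicitly)
pbvn <- function(a, b, rho) {
  if (a == -Inf || b == -Inf) return(0)
  if (a == Inf) return(pnorm(b))
  if (b == Inf) return(pnorm(a))
  s <- sqrt(1 - rho^2)
  integrate(function(z) dnorm(z) * pnorm((b - rho * z) / s),
            lower = -Inf, upper = a)$value
}

polychoric_2step <- function(x, y) {
  tab <- table(x, y)                     # observed I x J contingency table
  # Step 1: thresholds from cumulative marginal proportions
  thresholds <- function(counts) {
    cp <- cumsum(counts) / sum(counts)
    c(-Inf, qnorm(cp[-length(cp)]), Inf)
  }
  a <- thresholds(rowSums(tab))
  b <- thresholds(colSums(tab))
  nI <- nrow(tab)
  nJ <- ncol(tab)

  # Step 2: negative log-likelihood of the table as a function of rho
  negloglik <- function(rho) {
    cdf <- outer(seq_along(a), seq_along(b),
                 Vectorize(function(k, l) pbvn(a[k], b[l], rho)))
    # cell probability = rectangle probability between adjacent thresholds
    p <- cdf[-1, -1] - cdf[-(nI + 1), -1] - cdf[-1, -(nJ + 1)] +
         cdf[-(nI + 1), -(nJ + 1)]
    -sum(tab * log(pmax(p, 1e-12)))
  }
  optimize(negloglik, interval = c(-0.999, 0.999))$minimum
}

# Usage: 5-point items cut from latent continua correlated at 0.6
set.seed(42)
z1 <- rnorm(2000)
z2 <- 0.6 * z1 + sqrt(1 - 0.6^2) * rnorm(2000)
cuts <- c(-Inf, -0.5, 0.3, 0.9, 1.5, Inf)
x <- cut(z1, breaks = cuts, labels = FALSE)
y <- cut(z2, breaks = cuts, labels = FALSE)
r_poly <- polychoric_2step(x, y)
r_pearson <- cor(x, y)
c(polychoric = r_poly, pearson = r_pearson)
```

Because the estimate is a correlation between the latent continua, it lands between -1 and 1 and reads just like a Pearson correlation. On this simulated data it should sit close to the 0.6 used to generate it, while Pearson on the 1-5 codes comes out lower.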

The only trick is that not all software gives you the option to run the PCA on the polychoric correlations in that first step.

Let’s review some options.

A Few Software Options for PCA on Polychoric Correlations

These are in order of increasing complexity. (Yup, R is easiest and SPSS is hardest.)

R

This is very easy to do in R.

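The psych package in R has a polychoric() function for the correlation matrix itself, and polychoric correlations are an option in its factor analysis functions (fa.poly(), or fa() with cor = "poly"). For a PCA, you can pass the polychoric matrix to psych's principal() function.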
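Here is a sketch of both routes in psych, on simulated 5-point items (the data and names are hypothetical). polychoric() returns the matrix in $rho, principal() runs a PCA on it, and fa() with cor = "poly" runs a factor analysis directly on the raw items. This assumes a reasonably recent version of psych.

```r
# Sketch using the psych package (install.packages("psych") if needed).
library(psych)

# Simulated 5-point items driven by one latent trait; names are hypothetical
set.seed(123)
n <- 1000
latent <- rnorm(n)
cuts <- c(-Inf, -1, -0.3, 0.4, 1.2, Inf)
items <- data.frame(sapply(1:6, function(k) {
  cut(0.7 * latent + sqrt(1 - 0.49) * rnorm(n), breaks = cuts, labels = FALSE)
}))
names(items) <- paste0("q", 1:6)

# Step 1: polychoric correlation matrix (stored in $rho)
pc_cor <- polychoric(items)$rho

# Step 2a: PCA on that matrix; n.obs is supplied because a matrix
# alone does not carry the sample size
pca <- principal(pc_cor, nfactors = 1, n.obs = n)
print(pca$loadings)

# Step 2b: factor analysis on polychoric correlations in one call
efa <- fa(items, nfactors = 1, cor = "poly")
print(efa$loadings)
```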

Stata

In Stata and SAS, it’s a little harder. Both require that you first calculate the polychoric correlation matrix, save it, then use this as input for the principal component analysis.

In Stata, you have to use the user-written command polychoric to even calculate the correlation matrix. (You can find out more about Stata’s user-written commands here.)

Once you've got that, use the factormat command to run a factor analysis on this correlation matrix (or pcamat to run a principal component analysis on it).

SAS

In SAS, you first calculate the polychoric correlation matrix in Proc Freq, then output it as a data set. The goal is to produce a polychoric correlation matrix as input for Proc Factor instead of the raw data.

(Note: All the major software packages let you base a PCA on a correlation matrix instead of the raw data. This is useful even with Pearson correlations if you have a very large data set.)

However, Proc Freq doesn't output the matrix in the layout Proc Factor expects (a TYPE=CORR data set), so the next step is a data step to reshape it.

Once things are set up correctly, you can run the PCA in Proc Factor, specifying that the input data set is a correlation matrix.
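Running a PCA from a matrix alone looks similar in any package. In R, for example, base princomp() accepts a correlation matrix through its covmat argument. Here is a minimal sketch, with a made-up 3 x 3 matrix standing in for one computed elsewhere (such as a polychoric matrix read from a file):

```r
# Sketch: a PCA needs only the correlation matrix, not the raw data.
# The values below are made up for illustration.
R <- matrix(c(1.00, 0.55, 0.40,
              0.55, 1.00, 0.45,
              0.40, 0.45, 1.00),
            nrow = 3, byrow = TRUE,
            dimnames = list(c("q1", "q2", "q3"), c("q1", "q2", "q3")))

pc <- princomp(covmat = R)   # covmat = tells princomp this is a matrix, not data
summary(pc)                  # proportion of variance by component
unclass(loadings(pc))        # eigenvectors (what princomp calls "loadings")
```

princomp()'s "loadings" are the unscaled eigenvectors. They are not multiplied by the component standard deviations the way psych::principal() loadings are, so compare the two with that in mind.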

SPSS

In SPSS, it’s a bit of a mess.

SPSS requires the same 3-step process that SAS does:

- Calculate the polychoric correlation matrix and save it as a data set.

- Clean up that data set so that it is in the exact format needed for the Factor command to read it as a correlation matrix.

- In the syntax only (this doesn’t work in menus), run the PCA on the correlation matrix.

Every one of these steps is a bit, well, temperamental.

I’ve done this before and had the following happen:

- I keep getting error messages, even though I've successfully run this syntax before on the exact same data set.

- So I shut down SPSS, reopen it, then run the exact same syntax.

- It works.

Sigh.

(Side note: We go through a demonstration of these steps, in detail, in our PCA & EFA workshop. We even warn you about when you may need to restart SPSS.)

But that’s not even the hard part.

As of this writing, SPSS has no direct option to calculate polychoric correlations.

At all.

So either you have to:

- Run your polychoric correlations in other software, export the correlation matrix, then import it as an SPSS data set. Or

- Install the R Essentials HETCOR extension to SPSS, which uses R code to run the polychoric correlations within SPSS. This extension is freely available from IBM DeveloperWorks. If you're using a very recent version of SPSS, this is not hard: under the Utilities menu, you can install the R extensions. But if you have an older version, installing it is not easy, and involving your IT team is a good idea. Once it is installed, though, it actually adds an SPSS menu item for HETCOR. It's pretty cool.
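If you take the first route, the exported matrix has to end up in the layout SPSS uses for matrix data. That layout has a string ROWTYPE_ column (N, CORR), a string VARNAME_ column, and one column per item. The base-R helper below, to_spss_matrix(), is a hypothetical sketch that writes that layout to CSV. After importing it (keeping ROWTYPE_ and VARNAME_ as strings), the PCA would be run in syntax with something like FACTOR /MATRIX=IN(COR=*). Check the exact requirements against the FACTOR and matrix data documentation for your SPSS version.

```r
# Hypothetical helper: reshape a correlation matrix into the layout SPSS
# uses for matrix data sets (ROWTYPE_ and VARNAME_ columns).
to_spss_matrix <- function(R, n_obs) {
  vars <- colnames(R)
  # First row: sample size for every variable (VARNAME_ left blank)
  n_row <- data.frame(ROWTYPE_ = "N", VARNAME_ = "",
                      as.list(setNames(rep(n_obs, length(vars)), vars)),
                      check.names = FALSE, stringsAsFactors = FALSE)
  # One CORR row per variable, holding that row of the correlation matrix
  corr_rows <- data.frame(ROWTYPE_ = "CORR", VARNAME_ = vars, R,
                          row.names = NULL, check.names = FALSE,
                          stringsAsFactors = FALSE)
  rbind(n_row, corr_rows)
}

# Usage with a made-up 2 x 2 matrix and sample size
R <- matrix(c(1, 0.5, 0.5, 1), nrow = 2,
            dimnames = list(c("q1", "q2"), c("q1", "q2")))
out <- to_spss_matrix(R, n_obs = 300)
csv_path <- tempfile(fileext = ".csv")   # replace with your own path
write.csv(out, csv_path, row.names = FALSE)
```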

Updated 2/7/2025 for SPSS options.

Hi! I’m very late here, but I saw your response above about being able to do a version of PCA with binary data as well. (Although this does relate to my own data, it’s a general question that I believe will still be useful to others.)

I have a mix of explanatory variables – some are truly numeric, some are ordered factors (currently coded as numeric), and some are binary. Is there any hope of using PCA for this?

I’ve looked into NMDS instead, but it seems much more difficult to interpret the results with NMDS.

Thanks in advance for any help!! I’ve been stuck on this problem for literally years…and unfortunately I do not have the financial means to join Statistically Speaking right now.

Thank you for the article.

In this type of situation, there is also the possibility of running an IRT model, with one or more dimensions (which would be similar to the number of components of the PCA). Do you agree?

Best wishes,

Vanessa

I am the author of the HETCOR procedure. As far as I can see, it works fine in Statistics 26 as long as you have installed R and the R Essentials for that version (see https://developer.ibm.com/predictiveanalytics/downloads/ for details on that).

Note that the procedure determines what type of correlation to compute for each pair based on the measurement levels set for the variables, so if you want polychoric, make sure that they are declared as ordinal.

The easiest way to install HETCOR once the R Essentials are installed is just to use the Extensions > Extension Hub menu (V23 or later).

The article states that Pearson correlation assumes normality. This is incorrect. Only interval scale is assumed.

Thanks, Jon.

Yeah, I think the tricky part is getting the right versions of R and R essentials installed. If I recall correctly, it’s much easier to do in more recent versions of SPSS.

I’m running SPSS Version 22 and the HETCOR extension no longer works. However, I did find an alternative to calculate the polychoric correlation here.

https://www.ncbi.nlm.nih.gov/pubmed/25123909.

Of course, I still need to format the correlation matrix and run the syntax as Karen mentions above.

Hi Joan,

Interesting. I’m also on 22 and I thought it was still working. I will check that out. I do know you need to have the right version of R installed.

But thanks for that other option. This looks easier to run than getting the HETCOR extension installed. That is not for the faint of heart.

I am currently in the process of updating all the material for the PCA and EFA workshop, and I will check this out and possibly incorporate it.

Hi Karen!

Which should we use, PCA or confirmatory factor analysis, to validate a one-factor scale with 25 items and 3 response options (0 = no, 0.5 = partly, 1 = yes)?

In that case, polychoric correlation is the most appropriate, right?

Hi Henrique,

Yes, that's exactly a situation for polychoric correlation. And based on what you've said, you'd use factor analysis, not PCA.

Thank you for this great discussion. I’ve “fought the battle” with the use of HETCOR in SPSS and was successfully able to run factor analyses with polychoric and/or tetrachoric matrices after taking your class. In dealing with all of that, I stumbled upon Item Response Theory (IRT) for dichotomous and ordinal scales. Just from a big picture perspective (I suspect this is a big topic), do the topic areas of IRT and the use of PCA with polychoric matrices overlap? For example, are the various IRT procedures a different way of doing the same thing? Thanks so much.

Hi Carrie,

First, congratulations on your successful HETCOR battle and I’m so glad that the workshop was helpful. 🙂

I don’t know a lot about IRT — I have never personally used it. I know it’s used a lot in educational testing, where each item has a “right” answer. I believe it focuses much more on the individual items and has parameters about the “difficulty” of each item and how well it measures the latent ability.

But you’re a Statistically Speaking member, right? It includes a Stat’s Amore training on IRT, which will give you a lot more information. Here’s the description: https://www.theanalysisfactor.com/member-item-response-theory-rasch/

Thank you very much for the article; it was useful. I wonder if you could provide any insights on analyzing psychometric scales such as the Marlowe-Crowne social desirability scale. I would appreciate anything you could share on this.

Hi Ashraf,

I don't know that specific scale, but many psychometric scales have Likert items and should be treated this way (though most likely with factor analysis, not principal component analysis).

Hello,

I wondered whether using the polychoric correlation matrix would be an appropriate strategy for running an EFA on data that are continuous, but highly skewed (with a mass near 0 or 100)?

Many thanks,

Ruth

Hi Karen,

Thank you. This is helpful.

Can we do PCA on two-level (yes/no) ordinal data using this approach? Or is it only applicable to multi-category (like five-category) ordinal data?

Hi Merhawi,

If you have binary variables, you do something similar. Binary data requires tetrachoric correlations. They’re available in the same software and the approach is the same.
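In R, for example, psych's tetrachoric() plays the role of polychoric() for yes/no items. Here is a sketch on simulated binary items (the names and thresholds are hypothetical), followed by a PCA on the resulting matrix:

```r
# Sketch: tetrachoric correlations for yes/no items, then a PCA on them.
library(psych)

set.seed(7)
n <- 1000
latent <- rnorm(n)
# Each item is 1 ("yes") when its own latent response crosses a threshold
yes_no <- data.frame(sapply(c(-0.5, 0, 0.3, 0.8), function(thr) {
  as.integer(0.7 * latent + sqrt(1 - 0.49) * rnorm(n) > thr)
}))
names(yes_no) <- paste0("item", 1:4)

tet <- tetrachoric(yes_no)    # list: matrix in $rho, thresholds in $tau
round(tet$rho, 2)

pca <- principal(tet$rho, nfactors = 1, n.obs = n)
pca$loadings
```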

Hi, how can I run PCA to compare livelihood diversity strategies?

Hi,

I might be mistaken, but the fa.poly() function in R (now called fa()) estimates FA, not PCA. Is there an R function that estimates PCA based on polychoric correlations?

Thanks!

dm

The psych package has a polychoric() function that calculates polychoric correlations. You can then pass the resulting correlation matrix to the base R function princomp() through its covmat argument, so that it is treated as a correlation matrix rather than as raw data. For example:

```r
library(psych)

# use lsat6 example data included with psych package
pcor <- polychoric(lsat6)

# the correlation matrix is stored in rho;
# covmat = tells princomp() this is a correlation matrix, not raw data
pc <- princomp(covmat = pcor$rho)

summary(pc)
loadings(pc)
```

Hi, did you ever post an explanation of how to run a PCA on the polychoric correlation matrix in SPSS? I've done the polychoric matrix, but I'm not sure I can do the rest.

Thanks

Kamran

Hi Kamran,

Unfortunately, it's very involved to get this working in some software. In others it's easy. We go over this in detail in our PCA workshop.