Effect size statistics are extremely important for interpreting statistical results. The emphasis on reporting them has been a great development over the past decade.

When most journal editors ask for effect size statistics, they mean standardized effect size statistics. That is, effect size statistics that are free of units of measurement.

Examples include correlation coefficients, eta-squared, omega-squared, and Cohen’s d. There are many others, but these are common.

But they’re not the only kind. And they’re not always the most useful kind.

Unstandardized effect size statistics also measure the size of the effect. They’re in the original units of the variables. Regression coefficients, mean differences, and covariances are all unstandardized.

They’re useful too, especially if the units are meaningful.

But what about odds ratios?

Odds Ratios (OR) are sometimes standardized, but not always.

What makes common effect sizes standardized

Let’s first look at two similar standardized effect size statistics to see why this happens with odds ratios.

Pearson’s correlation coefficient is probably the first standardized effect size statistic you learned, though no one may have called it that.

It describes the strength of the linear relationship between two numerical variables. It is standardized because in its denominator, we divide by the standard deviations of BOTH variables. This removes both variables’ units.
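Here is a minimal sketch of that idea in Python. The height and weight numbers are made up for illustration, and the helper names `covariance` and `pearson_r` are my own, not from any particular package. The covariance changes when the units change, but the correlation does not.

```python
import statistics

def covariance(x, y):
    """Sample covariance: still carries the units of x times the units of y."""
    mean_x, mean_y = statistics.fmean(x), statistics.fmean(y)
    return sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / (len(x) - 1)

def pearson_r(x, y):
    """Covariance divided by BOTH standard deviations, which cancels both units."""
    return covariance(x, y) / (statistics.stdev(x) * statistics.stdev(y))

# Hypothetical data, invented for this example
height_cm = [150.0, 160.0, 165.0, 172.0, 180.0, 191.0]
weight_kg = [52.0, 58.0, 63.0, 70.0, 79.0, 88.0]

# The same measurements expressed in inches and pounds
height_in = [h / 2.54 for h in height_cm]
weight_lb = [w * 2.20462 for w in weight_kg]

cov_metric = covariance(height_cm, weight_kg)
cov_imperial = covariance(height_in, weight_lb)
r_metric = pearson_r(height_cm, weight_kg)
r_imperial = pearson_r(height_in, weight_lb)

print("covariance:", cov_metric, "vs", cov_imperial)  # differ with the units
print("correlation:", r_metric, "vs", r_imperial)     # agree up to rounding
```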

We’re left with a correlation coefficient that ranges from -1 to 1. Once you get used to correlations a bit, they’re not hard to interpret.

Cohen’s d is another common standardized effect size statistic. It’s used to describe the relationship between a binary (two-group) predictor and a numerical outcome: it is the difference between the two group means, expressed in standard deviations. We remove the units of Y, the numerical outcome, just like we did in the correlation: we divide by its standard deviation.

We don’t need to remove units for X, the binary grouping variable, because grouping variables don’t have units.
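A small Python sketch of this, with invented data. One assumption to flag: I use the pooled standard deviation of the two groups as the denominator, which is a common choice but not the only one. The function name `cohens_d` is just for this example.

```python
import statistics

def cohens_d(group_a, group_b):
    """Mean difference divided by the pooled SD (an assumed, common choice)."""
    n_a, n_b = len(group_a), len(group_b)
    var_a, var_b = statistics.variance(group_a), statistics.variance(group_b)
    pooled_sd = (((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2)) ** 0.5
    return (statistics.fmean(group_a) - statistics.fmean(group_b)) / pooled_sd

# Hypothetical task-completion times in seconds
treatment_sec = [310.0, 295.0, 330.0, 305.0, 320.0]
control_sec = [350.0, 340.0, 365.0, 355.0, 345.0]

# The same times in minutes
treatment_min = [t / 60 for t in treatment_sec]
control_min = [t / 60 for t in control_sec]

# The unstandardized effect (raw mean difference) depends on the units of Y
raw_diff_sec = statistics.fmean(treatment_sec) - statistics.fmean(control_sec)
raw_diff_min = statistics.fmean(treatment_min) - statistics.fmean(control_min)

# The standardized effect does not
d_seconds = cohens_d(treatment_sec, control_sec)
d_minutes = cohens_d(treatment_min, control_min)

print("mean difference:", raw_diff_sec, "sec vs", raw_diff_min, "min")
print("Cohen's d:", d_seconds, "vs", d_minutes)
```

Swapping the order of the two groups only flips the sign of d. The size of the effect stays the same.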

Cohen’s d can be positive or negative, depending on which group mean is subtracted from which. Its magnitude has a lower limit of 0 and no upper limit. But as you gain experience working with them, you can get an idea of how to interpret how large they are. (Please don’t blindly use Cohen’s original rules-of-thumb: S, M, L. They were never intended to work in all areas of research.)

Why Odds Ratios are sometimes standardized

Odds Ratios work just like Cohen’s d. There is one binary variable, but this time it’s the outcome, Y.

If X is also categorical, the OR will naturally be standardized. There are no units of X or Y.

I’ve seen sources say that ORs are standardized for this reason. They assume X is categorical. This assumption might be common in some fields, where the comparison is always between treatment and control.
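To make the categorical case concrete, here is a tiny Python sketch with made-up counts from a hypothetical treatment-versus-control study. The function name and argument names are mine. Every input is a count, so there are no units anywhere to remove.

```python
def odds_ratio(events_treated, nonevents_treated, events_control, nonevents_control):
    """Odds of the event in the treated group divided by the odds in the control group."""
    odds_treated = events_treated / nonevents_treated
    odds_control = events_control / nonevents_control
    return odds_treated / odds_control

# Hypothetical 2x2 table: 30 of 100 treated and 15 of 100 controls had the event
or_example = odds_ratio(30, 70, 15, 85)
print("odds ratio:", or_example)
```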

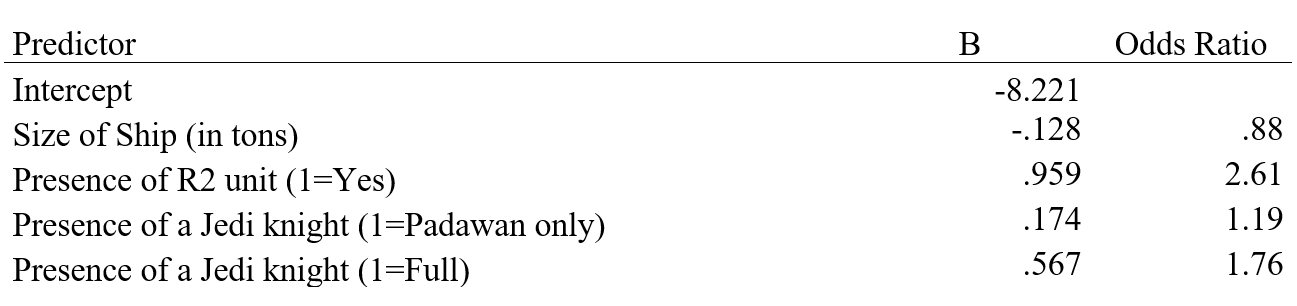

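But when X is numerical, the odds ratio will have units by default. It describes the change in odds for a one-unit increase in X, so its size depends on whether X is measured in, say, months or years. I don’t know of any statistical software that will give you a standardized version of an odds ratio.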

However, it’s not hard to do yourself. Simply remove the units of any numerical Xs by dividing each X by its standard deviation before putting it into the analysis. None of the resulting odds ratios on your output will have units. Each one describes the change in odds for a one-standard-deviation increase in that X.
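Here is a rough sketch of that recipe in plain Python. Everything in it is an assumption made for illustration: the ages and outcomes are invented, and `fit_logistic_slope` is a bare-bones Newton-Raphson fit for a single predictor that I wrote for this example, not a routine from any statistics package. In practice you would divide X by its standard deviation and then run your usual logistic regression software.

```python
import math
import statistics

def fit_logistic_slope(x, y, iterations=50):
    """Maximum likelihood slope of a one-predictor logistic regression.

    x is centered internally for numerical stability; centering changes
    the intercept but not the slope.
    """
    mean_x = statistics.fmean(x)
    xc = [v - mean_x for v in x]
    b0, b1 = 0.0, 0.0
    for _ in range(iterations):
        p = [1.0 / (1.0 + math.exp(-(b0 + b1 * v))) for v in xc]
        w = [pi * (1.0 - pi) for pi in p]
        # Gradient of the log-likelihood
        g0 = sum(yi - pi for yi, pi in zip(y, p))
        g1 = sum((yi - pi) * v for yi, pi, v in zip(y, p, xc))
        # Information matrix entries (2x2, solved by hand)
        h00 = sum(w)
        h01 = sum(wi * v for wi, v in zip(w, xc))
        h11 = sum(wi * v * v for wi, v in zip(w, xc))
        det = h00 * h11 - h01 * h01
        step0 = (h11 * g0 - h01 * g1) / det
        step1 = (h00 * g1 - h01 * g0) / det
        b0, b1 = b0 + step0, b1 + step1
        if max(abs(step0), abs(step1)) < 1e-12:
            break
    return b1

# Hypothetical data: age and a binary outcome
age_years = [22, 25, 28, 31, 34, 37, 40, 43, 46, 49, 52, 55, 58, 61, 64, 67]
outcome = [0, 0, 0, 1, 0, 0, 1, 0, 1, 1, 0, 1, 1, 1, 0, 1]
age_months = [a * 12 for a in age_years]

# Unstandardized odds ratios: per one year and per one month of age
slope_years = fit_logistic_slope(age_years, outcome)
or_per_year = math.exp(slope_years)
or_per_month = math.exp(fit_logistic_slope(age_months, outcome))

# Standardized odds ratios: divide X by its SD before fitting
sd_years = statistics.stdev(age_years)
sd_months = statistics.stdev(age_months)
or_std_from_years = math.exp(fit_logistic_slope([a / sd_years for a in age_years], outcome))
or_std_from_months = math.exp(fit_logistic_slope([a / sd_months for a in age_months], outcome))

print("OR per year:", or_per_year, "| OR per month:", or_per_month)
print("OR per SD:", or_std_from_years, "and", or_std_from_months)
```

The per-year and per-month odds ratios differ because they answer different questions. The per-SD odds ratio comes out the same whichever units age started in, and it equals the unstandardized odds ratio raised to the power of the standard deviation.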
