In the world of data analysis, there’s not always one right statistical analysis for every research question.

There are so many issues to take into account. They include the research question to be answered, the measurement of the variables, the study design, data limitations and issues, the audience, practical constraints like software availability, and the purpose of the data analysis.

So what do you do when a reviewer rejects your choice of data analysis? And you believe it’s right? This reviewer can be your boss, your dissertation committee, a co-author, the PI, or the ubiquitous journal reviewer #2.

What do you do?

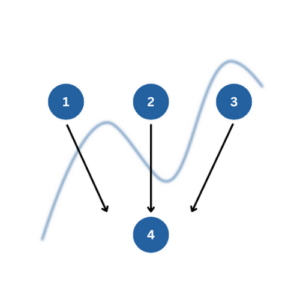

There are ultimately two choices: You can redo the analysis their way. Or you can fight for your analysis. How do you choose?

The one absolute in this choice is that you have to honor the integrity of your data analysis and yourself.

Good science matters.

Do not be persuaded to do an analysis that will produce inaccurate or misleading results. Especially when readers will actually make decisions based on these results.

On the other hand, if no one will ever read your report, this is less crucial.

But even within that absolute, there are often choices. Keep in mind the two goals in data analysis:

- The analysis needs to accurately reflect the limits of the design and the data, while still answering the research question. Assumptions have to be reasonably met.

- The analysis needs to communicate the results to the audience.

When to fight for your analysis

So first and foremost, if your reviewer is asking you to do an analysis that does not appropriately take into account the design or the variables, you need to fight.

For example, a number of years ago I worked with a researcher who had a study with repeated measurements on the same individuals. It had a small sample size and an unequal number of observations on each individual.

It was clear that to take into account the design and the unbalanced data, the appropriate analysis was a linear mixed model.

The researcher’s co-author questioned the use of the linear mixed model, mainly because he wasn’t familiar with it. He thought the researcher was attempting something fishy. His suggestion was to use an ad hoc technique of averaging over the multiple observations for each subject.

This was a situation where fighting was worth it.

Unnecessarily simplifying the analysis to please people who were unfamiliar with an appropriate method was not an option. The simpler model would have violated assumptions.
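
To see why averaging is a problem with unbalanced data, here is a minimal sketch in Python. It assumes a simple random-intercept model, and the variance values and observation counts are made up for illustration; they are not from the researcher's study. Under that model, the variance of a subject's average is the between-subject variance plus the within-subject variance divided by that subject's number of observations.

```python
def variance_of_subject_mean(between_var, within_var, n_obs):
    """Variance of one subject's averaged score under a random-intercept model.

    between_var: variance of the subject-level intercepts (hypothetical value)
    within_var:  residual variance of individual observations (hypothetical value)
    n_obs:       number of observations averaged for this subject
    """
    return between_var + within_var / n_obs


# Hypothetical variance components and an unbalanced design
between_var = 4.0
within_var = 9.0
obs_per_subject = {"s1": 1, "s2": 3, "s3": 9}

# Variance of each subject's average
mean_variances = {
    subject: variance_of_subject_mean(between_var, within_var, n)
    for subject, n in obs_per_subject.items()
}

for subject, var in mean_variances.items():
    print(subject, obs_per_subject[subject], var)
```

With these made-up numbers, the subject with one observation has an average with variance 13, while the subject with nine observations has an average with variance 5. An analysis that treats every average as equally precise is assuming something that isn't true. A linear mixed model works with the individual observations, so it takes these differences in precision into account.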

This was particularly important because the research was being submitted to a high-level journal.

So it was the researcher’s job to educate not only his coauthor, but the readers, in the form of explaining the analysis and its advantages, with citations, right in the paper.

When to jump through hoops

In contrast, sometimes the reviewer is not really asking for a completely different analysis. They just want a similar analysis that reports different specific statistics.

Let’s use another example with repeated measures data.

In this example, the client had a simple (2) pre-post by (3) treatment group design. Time was within subjects and treatment group was between subjects.

I’ve seen it happen many times that the committee or reviewer #2 is familiar with Repeated Measures ANOVA and wants to see the types of statistics that are easy to compute for ordinary least squares (OLS) statistical models.

Examples include a model R² and effect size statistics like η² (eta squared).

This is a situation where it may be easier to jump through the hoop, and doing so produces no ill effects.*

Running the analysis they prefer won’t violate any assumptions or produce inaccurate results. This assumes you have no missing data in the design and it’s reasonable to assume sphericity (both of which were true in this case).
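
If you haven't computed these effect sizes by hand, here is a small sketch in Python of how eta squared and partial eta squared come out of the sums of squares in an ANOVA table. The function names are my own, and the sums of squares are hypothetical values chosen only to make the arithmetic easy. They are not from the client's data.

```python
def eta_squared(ss_effect, ss_total):
    """Proportion of total variation attributed to the effect."""
    return ss_effect / ss_total


def partial_eta_squared(ss_effect, ss_error):
    """Proportion of variation attributed to the effect, after setting aside
    the variation explained by the other effects in the model."""
    return ss_effect / (ss_effect + ss_error)


# Hypothetical sums of squares for one effect in an ANOVA table
ss_effect = 30.0   # sum of squares for the effect of interest
ss_error = 90.0    # error sum of squares used to test that effect
ss_total = 200.0   # total sum of squares

eta2 = eta_squared(ss_effect, ss_total)
partial_eta2 = partial_eta_squared(ss_effect, ss_error)

print(f"eta squared: {eta2:.2f}")
print(f"partial eta squared: {partial_eta2:.2f}")
```

Both are simple ratios once an OLS-based procedure has produced the sums of squares, which is part of why reviewers who know Repeated Measures ANOVA expect to see them.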

If the reviewer can stop your research in its tracks, it may be worth it to rerun the analysis to get the statistics they want to see reported.

You do have to decide whether the cost of jumping through the hoop, in terms of time, money, and emotional energy, is worth it.

If the request is relatively minor, it usually is. If it’s a matter of rerunning every analysis you’ve done to indulge a committee member’s pickiness, it may be worth standing up for yourself and your analysis.

*A note on these examples: there are many other design issues that can make a repeated measures ANOVA not work and a fight worth it. You can read about them here.

How to Fight for your Analysis

When you’re dealing with anonymous reviewers, the situation can get sticky. After all, you cannot ask them to clarify their concerns. And you have limited opportunities to explain the reasons for choosing your analysis.

It may be harder to discern if they are being overly picky, don’t understand the statistics themselves, or have a valid point.

If you choose to stand up for yourself, be well armed. Research the issue until you are absolutely confident in your approach (or until you’re convinced that you were missing something).

A few hours in the library or talking with a trusted expert is never a wasted investment. Compare that to running an unpublishable analysis to please a committee member or coauthor.

Often, the problem is not whether you did the right statistical analysis, but how you explained it. It’s your job to explain your analysis with enough detail and the correct wording. You’ll need to include why the analysis is appropriate and, if it’s unfamiliar to readers, what it does.

Rewrite that section, making it very clear. Ask someone to review it. Cite other research that uses or explains that statistical method.

Whatever you choose, be confident that you made the right decision, then move on.

I’m really interested in this.

So, is the platform ready for online courses and tutorials on the subject?

The issue raised here is actually powerful and very important. I really appreciate it. That said, I have one more difficulty: identifying the right variables to run a Value Chain Analysis for a certain agricultural production-to-market area. So what kind of variables should I collect? And which data-gathering tool should I employ?

Thanks. So I’d have to hear way more information about your research and analysis to be able to help at all. And even then, we can ask you a lot of questions that help you figure out what variable to collect, but we can’t tell you.

You might want to look into our Statistically Speaking program: https://www.theanalysisfactor.com/membership-open/

Hello Karen. Thanks for addressing this common problem. I agree with most of what you wrote, but this statement about factor analysis caught my eye:

“For example a simple confirmatory factor analysis can be run in standard statistical software like SAS, SPSS, or Stata using a factor analysis command. Or it can be run it in structural equation modeling software like Amos or MPlus or using an SEM command in standard software.”

That statement seems at odds with this IBM TechNote:

https://www.ibm.com/support/pages/confirmatory-factor-analysis-cfa-spss-factor

It mentions multiple group factor analysis, and describes it as “an [older] approach to CFA that could be addressed in part through SPSS”. But for CFA in the way it is typically done now, SPSS users are advised to use AMOS.

Can you direct me to any worked examples of CFA done using a command designed for EFA?

Thanks.

Hi Bruce,

Thanks. Yes, I do agree with IBM here. The key difference is that in standard FA procedures (that are built for EFA), the most you can specify is the number of factors. SEM software, like AMOS, allows you to also specify which variables load on each of those factors. Sometimes with clear factor structure, you’ll get the same results, but I’m sure there are situations where you won’t.

Perhaps this isn’t the best example, and I’ll put in a better one. I actually think I already have one. 🙂

Thank you so much

Great article Karen!

I can’t emphasize enough the need to arm oneself with citations and previous literature that support your way of doing things. Statistics can be quite subjective and even sources differ on many things such as sample sizes, multiplicity adjustments, and much more. I always say there is no better recourse than a good resource! (OK, I don’t always say that, I just made it up. But I’m going to start saying that :).

Your information is always useful and user-friendly. Thanks again for your well-informed and enlightened insights!

I totally agree. Amazing article. Thank you!