As mixed models are becoming more widespread, there is a lot of confusion about when to use these more flexible but complicated models and when to use the much simpler and easier-to-understand repeated measures ANOVA.

One thing that makes the decision harder is that sometimes the results from the two models are exactly the same, and sometimes they are vastly different.

In many ways, repeated measures ANOVA is antiquated — it’s never better or more accurate than mixed models. That said, it’s a lot simpler.

As a general rule, you should use the simplest analysis that gives accurate results and answers the research question. I almost never use repeated measures ANOVA in practice, because it’s rare to find an analysis where the flexibility of mixed models isn’t an advantage in either giving accurate results or answering a more sophisticated research question.

But they do exist.

Here are some guidelines on similarities and differences:

1. Simple design, complete data, normal residuals

If the design is very simple and there are no missing data, you will very likely get identical results from Repeated Measures ANOVA and a Linear Mixed Model. By simple, I mean something like a pre-post design (with only two repeats) or an experiment with one between-subjects factor and another within-subjects factor.

If that’s the case, Repeated Measures ANOVA is usually fine.

The flexibility of mixed models becomes more advantageous the more complicated the design.

2. Non-normal residuals

Both models assume that the dependent variable is continuous, unbounded, and measured on an interval or ratio scale and that residuals are normally distributed.

There are, however, generalized linear mixed models that work for other types of dependent variables: categorical, ordinal, discrete counts, etc. So if you have one of these outcomes, ANOVA is not an option. There is no Repeated Measures ANOVA equivalent for count or logistic regression models.

(There are GEE models, but in many ways they are closer to mixed models in how you set up the data, how they are estimated, and how you measure model fit. For example, you can’t calculate their sums of squares by hand, the way you can in Repeated Measures ANOVA.)
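
For a sense of what "by hand" means, here is a minimal sketch in plain Python of the sums-of-squares arithmetic for a one-way repeated measures ANOVA. It assumes a single within-subjects factor and complete data; the function names and the scores are hypothetical, for illustration only.

```python
from math import sqrt
from statistics import mean, stdev


def rm_anova_oneway(data):
    """One-way repeated measures ANOVA on complete wide-format data.

    data: one row per subject, each row holding that subject's score
    under every condition (no missing values allowed).
    Returns (F, df_conditions, df_error).
    """
    n = len(data)       # number of subjects
    k = len(data[0])    # number of repeated conditions
    grand = mean(y for row in data for y in row)

    cond_means = [mean(row[j] for row in data) for j in range(k)]
    subj_means = [mean(row) for row in data]

    ss_total = sum((y - grand) ** 2 for row in data for y in row)
    ss_cond = n * sum((m - grand) ** 2 for m in cond_means)
    ss_subj = k * sum((m - grand) ** 2 for m in subj_means)
    # What is left after removing condition and subject effects is the
    # subject-by-condition residual used as the error term.
    ss_error = ss_total - ss_cond - ss_subj

    df_cond = k - 1
    df_error = (n - 1) * (k - 1)
    f = (ss_cond / df_cond) / (ss_error / df_error)
    return f, df_cond, df_error


def paired_t(pre, post):
    """Paired t statistic for a two-time-point (pre-post) design."""
    diffs = [b - a for a, b in zip(pre, post)]
    return mean(diffs) / (stdev(diffs) / sqrt(len(diffs)))


# Hypothetical pre-post scores for five subjects.
scores = [[10, 12], [8, 11], [12, 13], [9, 9], [11, 14]]
f, df1, df2 = rm_anova_oneway(scores)
t = paired_t([row[0] for row in scores], [row[1] for row in scores])
print(f"F({df1}, {df2}) = {f:.3f}; paired t squared = {t * t:.3f}")
```

With only two repeats, as in a pre-post design, the F for time works out to the square of the paired t statistic.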

3. Clustering

In many designs, there is a repeated measure over time (or space), but subjects are also clustered in some other grouping. Students within classroom, patients within hospital, plants within ponds, streams within watersheds, are all common examples.

A repeated measures ANOVA can’t incorporate this extra clustering of subjects in some other clustering, but mixed models can. (In fact, this kind of clustering can get quite complicated.)
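
One way to see why this extra level is awkward: the natural way to get class into a repeated measures ANOVA is as a fixed between-subjects factor, which costs one coefficient for every class beyond the first. A mixed model can instead give class a random intercept, summarizing class-to-class differences with a single variance. The sketch below only does that bookkeeping; the function name is hypothetical.

```python
def class_effect_parameters(n_classes):
    """Return (fixed_factor_coefs, random_intercept_params) for 'class'.

    Fixed factor: one dummy coefficient per class beyond the reference class.
    Random intercept: one variance component, however many classes there are.
    """
    return n_classes - 1, 1


for n in (2, 30, 300):
    fixed, random_part = class_effect_parameters(n)
    print(f"{n:>3} classes: fixed factor -> {fixed} coefficients; "
          f"random intercept -> {random_part} variance parameter")
```

With two classes the difference hardly matters; with hundreds, the fixed-factor approach spends hundreds of coefficients on a grouping you only wanted to control for.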

4. Missing Data

As implied above, mixed models do a much better job of handling missing data. Repeated measures ANOVA can only use listwise deletion, which can cause bias and reduce power substantially. So use it only when there is very little missing data.
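
Here is a minimal sketch of what listwise deletion costs, using hypothetical scores with a few missed visits. Repeated measures ANOVA needs one complete row per subject, so any subject with a gap is dropped. A mixed model is fit to long-format data with one row per observed score, so it keeps everything that was actually measured. The function names are hypothetical.

```python
# Hypothetical scores at three time points; None marks a missed visit.
wide = {
    "s1": [20, 18, 15],
    "s2": [22, None, 17],
    "s3": [19, 17, 14],
    "s4": [25, 21, None],
    "s5": [None, 19, 16],
}


def listwise_delete(wide_data):
    """Keep only subjects observed at every time point (RM ANOVA style)."""
    return {s: ys for s, ys in wide_data.items() if None not in ys}


def to_long(wide_data):
    """One (subject, time, score) row per observed score; missing cells
    are simply absent. This is the layout a mixed model is fit to."""
    return [
        (s, t, y)
        for s, ys in wide_data.items()
        for t, y in enumerate(ys, start=1)
        if y is not None
    ]


complete = listwise_delete(wide)
long_rows = to_long(wide)
kept_listwise = sum(len(ys) for ys in complete.values())
print(f"Listwise deletion: {len(complete)} subjects, {kept_listwise} scores")
print(f"Long format: {len({s for s, _, _ in long_rows})} subjects, "
      f"{len(long_rows)} scores")
```

Keeping the partial cases is only half the story: the mixed model's estimates rest on the assumption that the data are missing at random, which is an assumption about your study, not something the software guarantees.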

5. Time as Continuous

Repeated measures ANOVA can only treat a repeat as a categorical factor. In other words, if measurements are made repeatedly over time and you want to treat time as continuous, you can’t do that in Repeated Measures ANOVA.

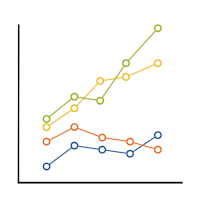

For example, let’s say you’re measuring anxiety level during weeks 1, 2, 4, 8, and 16 of an anxiety-reduction intervention. In mixed models you have the choice to treat those 5 time points as either 5 discrete categories or as true numbers, which accounts for the different spacing of the weeks.

Repeated Measures ANOVA can only do the former.
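
A minimal sketch of the two ways to code those five weeks, with hypothetical function names. Categorical coding turns week into indicator columns, one per week after the reference week, and ignores the spacing. Continuous coding keeps week as a single numeric column, so the model estimates one slope per week of elapsed time (a straight-line trend is assumed here only to keep the example short).

```python
weeks = [1, 2, 4, 8, 16]


def categorical_coding(times, reference=None):
    """Dummy (indicator) coding: one column per non-reference time point.
    Spacing is ignored; week 16 is just another category."""
    reference = times[0] if reference is None else reference
    levels = [t for t in times if t != reference]
    return [[1 if t == lvl else 0 for lvl in levels] for t in times]


def continuous_coding(times):
    """Time as a number: one column whose values keep the real spacing,
    so the 8 -> 16 step is eight times larger than the 1 -> 2 step."""
    return [[t] for t in times]


cat = categorical_coding(weeks)
cont = continuous_coding(weeks)
print("categorical columns:", len(cat[0]))  # 4 week effects to estimate
print("continuous columns:", len(cont[0]))  # 1 slope to estimate
```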

6. Differing number of repeats

Repeated measures ANOVA falls apart when repeats are unbalanced, which is very common in observed data.

A common study design records some repeated behavior for each individual, then compares some aspect of that behavior across different conditions.

I’ve seen this kind of study in many fields. One compared the diameters of trees from four oak species, measured at shoulder height, in areas that were and were not exposed to an invasive pest. Because those trees were observed, not planted, each plot had a different number of trees of each species. So some plots had many repeated data points for a species, while others had only a few.

Repeated measures ANOVA can’t incorporate the fact that each plot has a different number of trees of each species. It can use only one measurement per species in each plot.

The traditional way of dealing with this is to average the multiple measurements, so that each plot has one averaged value for each species. The problem is that this under-represents the true variability in the data. Those averages aren’t real data points; they’re averages with variability around them.

Mixed models can account for this variability and the imbalance with no problems.
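
A minimal sketch of the averaging problem, using hypothetical diameters for one species. Each plot has a different number of trees; collapsing them to plot means leaves three numbers whose spread is far smaller than the spread among the trees themselves, and an analysis of those means no longer knows that one rests on two trees and another on five.

```python
from statistics import mean, variance

# Hypothetical diameters (cm) of one oak species in three plots.
# Tree counts differ by plot, as they do with observed (not planted) data.
diameters = {
    "plot1": [30.0, 42.0, 25.0, 38.0, 35.0],
    "plot2": [28.0, 44.0],
    "plot3": [33.0, 27.0, 41.0, 36.0],
}

raw = [d for trees in diameters.values() for d in trees]
plot_means = {plot: mean(trees) for plot, trees in diameters.items()}

print("trees per plot:", {p: len(t) for p, t in diameters.items()})
print(f"variance among individual trees: {variance(raw):.2f}")
print(f"variance among plot averages: {variance(list(plot_means.values())):.2f}")
```

A mixed model would instead use all eleven trees, with plot as a grouping factor, so both the tree-to-tree variability and the unequal counts stay in the analysis.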

Conclusion

There are other differences, of course, but some of those get quite involved.

So what it really comes down to is that Repeated Measures ANOVA is a fine tool for some very specific situations. Once you deviate from those, trying to use it is like forcing a square peg through a round hole.

You might get it through, but you’ll mangle your peg in the process.


Hello,

This is very useful and I thank you for providing such information. I just wanted to know if you could give me some references so I could go through more details about these differences. Thank you in advance.

I have a question: I have worked on EAE (an animal model of multiple sclerosis). I have five groups (control, sham, and 3 treated groups), and I evaluated the clinical score of each group at nine time points. When I wanted to use repeated measures ANOVA, the data were not normally distributed. Transforming the data didn’t fix the problem, and I can’t use the Friedman test because I want to show the differences between groups over time.

Can you help me figure out what to do?

Hi Yahya,

I could, but I’d have to ask you a lot of questions, particularly about how your residuals are distributed and about your DV. See these:

https://www.theanalysisfactor.com/when-dependent-variables-are-not-fit-for-glm-now-what/

https://www.theanalysisfactor.com/extensions-general-linear-model/

Is there a difference in the way that ANOVA and mixed linear models handle shared variance/collinearity? And, as a related question, is one approach better for handling lots (5-10) of independent variables?

No on the multicollinearity, as that is just a function of the predictors.

And maybe. RM ANOVA treats every IV as categorical and will put in all possible interactions. I don’t think this is part of the theory of RM ANOVA, but the software procedures I’ve worked with all do this. If you have 10 predictors, you’re going to want to choose which interactions you want. That would be a mess to have every possible interaction.
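
To put a number on that mess: if a procedure includes every possible interaction among p categorical IVs (the behavior described above, assumed here), the model contains 2^p - 1 terms, counting main effects. A quick check in Python; the function name is hypothetical.

```python
from math import comb


def full_factorial_terms(n_factors):
    """Number of terms (main effects plus every interaction) in a model
    that includes all possible interactions among n_factors IVs."""
    return sum(comb(n_factors, order) for order in range(1, n_factors + 1))


for p in (2, 5, 10):
    print(p, "IVs ->", full_factorial_terms(p), "terms")
```

These are terms, not parameters: a term involving a factor with more than two levels uses several degrees of freedom, so the parameter count grows even faster.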

Hi,

Very nice explanation. I have a question: my dependent variable is ordinal. I want to run a repeated measures LMM. Is that possible? And how can I defend my choice of LMM to the jury? Could you point me to more material on LMMs for consumer behavior studies? It would be a great help.

Hi

Thank you for this explanation.

But it would help if you could compare GEE and mixed models for cluster designs.

I don’t get the argument for why “clustering” can’t be accommodated in a repeated measures ANOVA–typically implemented as a general linear model–that contains some repeated-measures factors and some between-subject factors.

Example:

There are 50 students in Class A and 50 in Class B. Each student takes a mid-term and a final exam. The design is a 2 (class: A, B) by 2 (exam: mid-term, final) mixed factorial with class (A or B) varying between subjects and exam (mid-term or final) varying within subjects. Most software packages support running this as a repeated measures ANOVA, using a general linear model algorithm. The “clustering” of students within classes isn’t a problem for the GLM.

RE: “A repeated measures ANOVA can’t incorporate this extra clustering of subjects in some other clustering, but mixed models can.”

RA, it works in that example only because you used Class as a factor in the model and class only had a few values. In other words, you have to test the effect of Class differences.

But what if you have students clustered into 30 classes instead of 2? Or 300? Class is simply a blocking variable. You don’t really care about testing for class differences, but you need to control for it.

Hi, thanks for the great explanations!

I have a question, though. You mentioned that averaging may under-represent the variability in the data. In most experiments, subjects have to complete multiple trials of each condition, I think to stabilize the results. For each condition, the subject’s responses are averaged across all the trials. By doing that, are we also under-representing the variation? If so, how should we deal with it? By putting each trial in the mixed model?

Hi Carol,

Yes, exactly. If you just account for it in the mixed model, you can account for the variability around the per-person-per-condition mean and still test effects of the treatments and other predictors on those means.

Hi Karen, thank you for your comprehensive explanation. I have used mixed linear modelling for a study and now I have to defend it. I used it because mixed models deal better with missing data AND because I have multiple trials in one condition. However, for my defense I need to know HOW the model deals with missing data, and how it affects power. Could you provide some information on that, or suggest some reading?

kind regards

Lotte

Hi Lotte,

I have assembled a number of good resources on this page: https://www.theanalysisfactor.com/resources/by-topic/missing-data/

thank you

I found this text very, very good, and it is so useful to everybody. I enjoyed it.

thanks a lot again