At The Analysis Factor, we are on a mission to help researchers improve their statistical skills so they can do amazing research.

We all tend to think of “Statistical Analysis” as one big skill, but it’s not.

Over the years of training, coaching, and mentoring data analysts at all stages, I’ve realized there are four fundamental stages of statistical skill:

Stage 1: The Fundamentals

Stage 2: Linear Modeling

Stage 3: Extensions of Linear Models

Stage 4: Advanced Models

There is also a stage beyond these where the mathematical statisticians dwell. But that stage is required for such a tiny fraction of data analysis projects that we’re going to ignore it for now.

If you try to master the skill of “statistical analysis” as a whole, it’s going to be overwhelming.

And honestly, you’ll never finish. It’s too big of a field.

But if you can work through these stages, you’ll find you can learn and do just about any statistical analysis you need to.

Two important points:

- You will not be able to work your way through these stages in the week you have left to finish your dissertation or submit your conference abstract. It takes years.

- You don’t need to be a statistician to do this. Yes, you’ll have to learn some statistics. Yes, it takes work. But any researcher who runs their own data analysis needs statistical skills. Here’s your roadmap to hone them.

The Three Components at Each Stage

If you were only interested in learning statistics as an intellectual exercise, you could work through these stages simply by increasing your statistical knowledge.

This is what classes do.

But we data analysts have two more things to master at each stage: data analysis skills and software skills.

Both are vitally important. It’s very common for someone’s knowledge to be a bit ahead of their data analysis and software skills, simply because they took a lot of stats classes.

The Ideal Way to Progress Through the Stages

In an ideal world, your first data analysis project or two would be simple–at the most fundamental stage. It would involve a one-way ANOVA or perhaps some non-parametric tests.

You would have already taken a couple of statistics classes and have some solid background knowledge. And you would have an accessible mentor who is knowledgeable, patient, and available to answer your questions when they come up.

You would learn a lot of data analysis skills on that project: setting up the data set, running tests, checking assumptions. You would also learn how to do all of this in the software of your choice. You’d gain experience in interpreting confidence intervals and calculating sample sizes.

You are now ready to move on to Stage 2. In your next project you could tackle some complicated linear models, like one with polynomial effects or multicollinearity.

Only after that project is done (and ideally a few more of this type) do you tackle a logistic regression model at Stage 3.

Here’s the thing.

I’ve never seen this play out in reality.

Most of the time, your very first study requires logistic regression. Oh, yeah, and a principal component analysis on the predictors to deal with the multicollinearity.

If you’re really piling it on, there are repeated measures.

So you may jump right up to Stage 3 or 4 and while you’re there trying to figure out odds ratios and maximum likelihood, you’re also struggling with getting the data set up correctly in your software (Stage 1) and figuring out the best way to build the model (Stage 2).

The other reality we’re working with here is that unless data analysis is your full time job, you can go months or years in between projects. So even if you’ve gotten pretty skilled on one project, there’s a bit of forgetting going on before the next.

The Real Way to Progress Through the Stages

Since that direct, uninterrupted path is not going to happen in this life, you’re going to have to hop around a bit.

Yes, the more you can do it by starting at the bottom and working your way up the stages, the easier it will be.

But realistically, you may have to jump ahead then back down again to fill in some holes in your skills and knowledge.

It’s like dancing a statistical-skill Time Warp.

The limitation is that it is nearly impossible to jump two stages. You might be able to jump from Stage 2 to 3 with a bit of work, but jumping up from 2 to 4 will require a LOT of time, guidance, and work.

We set up our workshops to give you the soup-to-nuts knowledge, practice, and guidance to progress toward the next stage through in-depth learning on one statistical method. Most of these are at Stages 2-4.

And we set up our Statistically Speaking membership to help you in a few ways that no one else is doing:

- Help you fill in some holes in your knowledge at lower stages

- Introduce you to statistical methods higher up that could be useful to you that you may not even realize exist

- Give you ongoing access to professional statistical consultants so you get help and guidance as you’re learning and gaining experience

- Discuss “how to approach data analysis” issues that cross stages

Okay, so the basic strategy is to:

- Work your way up the stages in order as much as you are able

- When you find you’re straddling stages, go back and learn (or re-learn) prior-stage concepts or attain skills you may be missing

- Get help wherever you are

- Try not to jump too many stages at once

Okay, so what are these stages and how do we navigate them? Here’s an overview of each one:

Stage 1: The Fundamentals

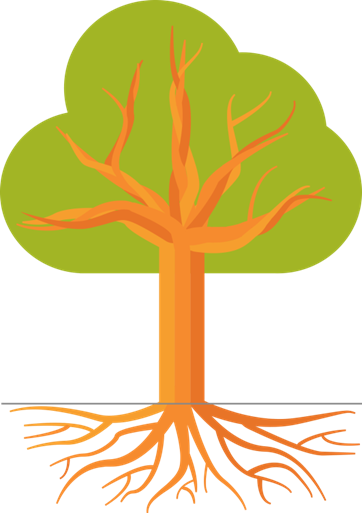

Stage 1 is the roots of our Statistical Skill tree. Your skills here are your anchor, your foundation.

Even though this is the beginning, the fundamentals aren’t easy. In fact, there are some weirdly abstract concepts here that stymie really smart people.

The Stage 1 Statistical Knowledge Component

Knowledge focuses on the concepts and vocabulary of probability, statistics, and data analysis. It includes pretty much everything you learned in a comprehensive Intro to Statistics course: sampling, descriptive and inferential statistics, hypothesis testing, and a lot of other basics.

The high end of this stage takes you through basic linear regression and ANOVA.

It usually requires 1-2 statistics classes to master the statistical knowledge in this stage.

The Stage 1 Data Analysis Skills Component

At this stage, you need to get started with the applied skills of data analysis. These include planning an analysis, doing the steps of analysis in the most efficient order, setting up and coding data, and presenting results through graphs, tables, and a clear and thorough report.

The Stage 1 Software Skills Component

Mastery of software usually includes a good working ability to enter and manipulate data; to define and work with variables in order to run the analysis; and run descriptive and inferential statistics.

This is harder than it sounds, but a good introductory software tutorial will be invaluable.
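As a rough illustration of what "run descriptive and inferential statistics" can look like in code, here is a minimal sketch in Python using only the standard library. The data values and variable names are made up for illustration. The interval uses a normal critical value as a large-sample approximation, which is an assumption on my part; with a sample this small, a t critical value from your statistical package would be the better choice.

```python
from statistics import NormalDist, mean, median, stdev

# Hypothetical reaction times in seconds (made-up values for illustration).
times = [1.2, 0.9, 1.5, 1.1, 1.3, 1.0, 1.4, 1.2, 1.1, 1.3]

# Descriptive statistics
n = len(times)
m = mean(times)
med = median(times)
s = stdev(times)            # sample standard deviation (n - 1 denominator)
se = s / n ** 0.5           # standard error of the mean

# Inferential statistics: approximate 95% confidence interval for the mean.
# Assumption: normal critical value used as a large-sample approximation.
z = NormalDist().inv_cdf(0.975)
ci_low, ci_high = m - z * se, m + z * se

print(f"n = {n}, mean = {m:.3f}, median = {med:.3f}, sd = {s:.3f}")
print(f"approximate 95% CI for the mean: ({ci_low:.3f}, {ci_high:.3f})")
```

The point is less the specific numbers than the habit: every quantity you report is produced by a line you can reread and rerun.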

Stage 1 Wrap-up

To master Stage 1, a researcher needs experience with running the data analysis for a few research projects. An honors or master’s thesis is usually the first.

This is when the Stage 1 statistical knowledge really starts to make sense, and you can make real progress on using software and learning how to conduct a data analysis.

Stage 2: Linear Modeling

Stage 2 makes up the trunk of our tree. The skills are still quite concentrated. They grow out of the roots and they hold up everything above. They need to be strong and healthy.

This stage is pretty big.

When you transition to Stage 2, there is a qualitative shift in your skills that will be the foundation for everything else.

First, we move beyond statistical tests and begin statistical modeling. It’s a subtle shift, but there are skills and ways of approaching an analysis that differ between tests and models.

Second, pretty much everything you learn at this point forward requires modeling. So you’re not just improving your skills by making this transition to modeling, you’re building a solid foundation.

To truly master this stage means a thorough understanding of how ANOVA and regression fit together into the General Linear Model, and an ability to fluently move from one to the other.
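One way to see how ANOVA and regression fit together is the simplest possible case: two groups. The sketch below, in plain Python with made-up data, computes a one-way ANOVA F statistic from sums of squares and then fits a regression of the same outcome on a 0/1 dummy variable. The function names are hypothetical helpers written for this illustration, not part of any package. Under this setup the intercept is the reference group mean, the slope is the difference in means, and the two F statistics agree.

```python
from statistics import mean

# Hypothetical scores for two groups (made-up numbers for illustration only).
control = [4.0, 5.0, 6.0, 5.0]
treated = [7.0, 8.0, 6.0, 9.0]

def anova_f(groups):
    """One-way ANOVA F statistic computed from sums of squares."""
    all_values = [y for g in groups for y in g]
    grand = mean(all_values)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    ss_within = sum((y - mean(g)) ** 2 for g in groups for y in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)

def dummy_regression(reference, other):
    """Ordinary least squares of the outcome on a 0/1 dummy (0 = reference)."""
    x = [0.0] * len(reference) + [1.0] * len(other)
    y = list(reference) + list(other)
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    fitted = [intercept + slope * xi for xi in x]
    ss_model = sum((f - y_bar) ** 2 for f in fitted)
    ss_resid = sum((yi - f) ** 2 for yi, f in zip(y, fitted))
    f_stat = (ss_model / 1) / (ss_resid / (len(y) - 2))
    return intercept, slope, f_stat

intercept, slope, f_regression = dummy_regression(control, treated)
f_anova = anova_f([control, treated])

print(f"intercept = {intercept:.3f}, control mean = {mean(control):.3f}")
print(f"slope = {slope:.3f}, mean difference = {mean(treated) - mean(control):.3f}")
print(f"regression F = {f_regression:.3f}, ANOVA F = {f_anova:.3f}")
```

With more than two groups the same idea holds, but the regression needs one dummy variable per non-reference group, which is where statistical software earns its keep.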

The other piece that is hugely important is a set of skills I call data analysis skills.

These are the skills that demand experience.

The Stage 2 Data Analysis Skills Component

These aren’t very different from the data analysis skills in Stage 1, but here they get more complicated.

- Planning the data analysis

- Preparing the data

- Running the data analysis in a logical order

- Presenting and communicating results

The Stage 2 Software Skills Component

Every skill and step in the statistical and data analysis components needs to be implemented in software. At this stage, I recommend learning one software package.

Aim to be a whiz in that software.

Many people will tell you that a particular software is better than others, but I disagree. There is a lot that goes into the choice of statistical software.

It’s most important to commit to one and become great at it.

At this stage, you must be using syntax, not menus, so that your analysis is reproducible.
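To show what "syntax, not menus" buys you, here is a minimal sketch of a scripted analysis in Python. The same idea applies to syntax in whatever package you choose. The data, column names, and cleaning rule are all hypothetical, and the raw data is embedded in the script only so the example runs on its own; in a real project you would read it from a file.

```python
import csv
import io
from statistics import mean

# Hypothetical raw data; embedded here only so the sketch is self-contained.
RAW_CSV = """id,group,score
1,a,12
2,a,15
3,b,
4,b,20
5,b,18
"""

def load_rows(text):
    """Read the raw data exactly as delivered, with no hand edits."""
    return list(csv.DictReader(io.StringIO(text)))

def prepare(rows):
    """Every cleaning decision is written down, so it can be rerun and reviewed."""
    cleaned = []
    for row in rows:
        if row["score"] == "":      # documented rule: drop cases with a missing score
            continue
        cleaned.append({
            "id": int(row["id"]),
            "group": row["group"].upper(),   # recode group labels consistently
            "score": float(row["score"]),
        })
    return cleaned

def group_means(rows):
    """Mean score per group, returned in a stable (sorted) order."""
    groups = {}
    for row in rows:
        groups.setdefault(row["group"], []).append(row["score"])
    return {name: mean(values) for name, values in sorted(groups.items())}

rows = prepare(load_rows(RAW_CSV))
means = group_means(rows)
print(f"{len(rows)} cases analyzed; group means: {means}")
```

If a reviewer asks six months later which cases were excluded and why, the answer is in the script rather than in your memory of which menu options you clicked.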

Stage 2 Wrap-up

Phew, that’s a big stage. Many people never need anything else.

If you’re not quite here, mastering this stage will get you very far in statistical analysis.

If you’re moving beyond this stage, however, realize that this is a broad set of skills. It’s very, very common to have gaps or holes at Stage 2. It’s hard not to unless you’ve worked on many dozens of models.

So if you’ve found you are working on some analysis in Stage 3 or 4 and you’re getting stuck on something here, just jump back and strengthen that foundation.

Twenty or thirty years ago, most researchers could stop here. Not anymore.

With the enormous growth in computing power has come the availability of increasingly sophisticated statistical methods. These methods account for issues that we previously had to gloss over with linear models.

Because these methods are now readily available in software, journal editors and grant issuers no longer let you get away with glossing anything over.

Nor does your conscientiousness about doing great data analysis.

Which is why most data analysts need to tackle some topics in Stage 3.

Stage 3: Extensions of Linear Models

Stage 3 has a different structure than the first two stages.

Now we branch out.

Every data analyst needs to know pretty much everything in Stages 1 and 2. Sure, if you never analyze experimental data, you may not need to know advanced ANOVA options, but for the most part, Stages 1 and 2 are a base that everyone needs.

Stage 3 branches. Each statistical method in Stage 3 is based on linear models and on the fundamental statistical concepts of Stage 1. None of them require any other branches, just a strong trunk and roots.

Few data analysts need every method in Stage 3.

Yes, you should know they exist in case you come across a new statistical problem. But at Stage 3, you’re picking a single statistical topic and going deep to learn just that one.

Then if you need another, you go deep on that one next.

The key attribute that puts a method at Stage 3 is that it’s one step beyond linear models. For each one you must understand and have the skills to perform a linear model. But that’s the only prerequisite.

There is no order to the Stage 3 topics. You can go right from Stage 2 to logistic regression, to linear mixed models, or to factor analysis.

There are a whole host of statistical methods that are either extensions of linear models or simply based on regression at their core.

So Stage 3 is primarily about going deep into learning statistical models in addition to linear models.
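To make "one step beyond linear models" a bit more concrete, here is a small sketch of logistic regression with a single binary predictor, written in plain Python. The counts are made up, and `fit_logistic` is a hypothetical helper written for this illustration rather than a function from any statistics package. The right-hand side is still a linear predictor, b0 + b1 * x, but it models the log-odds of the outcome and is estimated by maximum likelihood (here with a few Newton-Raphson steps) instead of least squares. In this simple case the exponentiated coefficient should match the odds ratio computed directly from the 2x2 table.

```python
import math

# Hypothetical grouped data: (x, number of cases, number of events).
groups = [(0, 40, 10), (1, 40, 20)]

def sigmoid(z):
    """Inverse of the logit link: turns log-odds into a probability."""
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(groups, iterations=25):
    """Maximum likelihood fit of logit(p) = b0 + b1 * x by Newton-Raphson."""
    b0, b1 = 0.0, 0.0
    for _ in range(iterations):
        g0 = g1 = 0.0            # gradient of the log-likelihood
        i00 = i01 = i11 = 0.0    # information matrix (negative Hessian)
        for x, n, events in groups:
            p = sigmoid(b0 + b1 * x)
            resid = events - n * p
            w = n * p * (1.0 - p)
            g0 += resid
            g1 += x * resid
            i00 += w
            i01 += x * w
            i11 += x * x * w
        det = i00 * i11 - i01 * i01
        # Newton step: solve the 2x2 system (information) * step = gradient
        b0 += (i11 * g0 - i01 * g1) / det
        b1 += (i00 * g1 - i01 * g0) / det
    return b0, b1

b0, b1 = fit_logistic(groups)
odds_ratio_model = math.exp(b1)

# Odds ratio straight from the table: odds = events / non-events in each group.
(_, n0, e0), (_, n1, e1) = groups
odds_ratio_table = (e1 / (n1 - e1)) / (e0 / (n0 - e0))

print(f"b0 = {b0:.4f}, b1 = {b1:.4f}")
print(f"odds ratio from model = {odds_ratio_model:.4f}")
print(f"odds ratio from table = {odds_ratio_table:.4f}")
```

Everything you know about building and interpreting a linear model still applies to the b0 + b1 * x part. What is new is the link function, the estimation method, and the odds-ratio interpretation.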

Stage 3 Data Analysis and Software Skills Components

All the data analysis and software skills you developed in Stage 2 are needed here too. The only new thing you learn is how to apply those skills to the specific Stage 3 model you’re working on and how those differ from Linear Models.

Stage 3 Wrap-up

So the new data analysis and software skills are relatively minor, compared to what you needed to learn at Stage 2.

The one new software skill I recommend here is to pick up a second statistical package. You can still think of it as a secondary package that you only use in a pinch, but it’s really, really helpful to have the skills to use two different packages.

Stage 4: Advanced Models

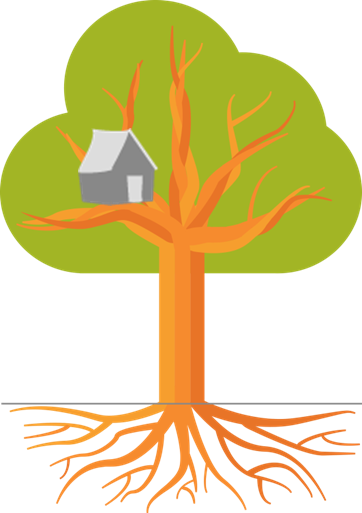

Stage 4 is the treehouse. It rests on multiple branches and is pretty high up there.

These may or may not be hard in a high-level-math sort of way. Some definitely are.

But more often they require understanding two or more of the methods and concepts at Stage 3. Some mix together two methods at Stage 3. Others are just super-specialized, esoteric specialties of something at Stage 3 that require statistical theory.

These are topics like zero-inflated models and generalized linear mixed models. It’s hard to jump right from linear models to one of these without first learning something at Stage 3: generalized linear models and/or linear mixed models.

Stage 4 Wrap-up

So first of all, if you find you need to learn something at Stage 4, realize that you’re doing hard stuff.

It’s not you.

But you can do it if you’ve got solid experience and knowledge at Stages 2 and 3. If you don’t, go back and fill in those holes.

If you have a deadline and don’t have time to fill the holes, get some help. That’s what we’re here for.
