One of those tricky but necessary concepts in statistics is the difference between crossed and nested factors.

As a reminder, a factor is any categorical independent variable. In experiments and other randomized designs, factors are often manipulated: an experimental manipulation such as Treatment vs. Control is a factor.

Observational categorical predictors, such as gender, time point, poverty status, etc., are also factors. Whether the factor is observational or manipulated won’t affect the analysis, but it will affect the conclusions you draw from the results.


Not many statistical methods are designed specifically for ordinal variables.

But that doesn’t mean you’re stuck with few options. There are more than you’d think.

Some are better than others, and which one fits best depends on the situation and your research questions.

Here are five options when your dependent variable is ordinal.


Are you learning Multilevel Models? Do you feel ready? Or in over your head?

It’s a very common analysis, and I have to say it is not easy to learn on your own. The concept of random effects is hard to wrap your head around, and there is a ton of new vocabulary and notation. Sadly, that vocabulary and notation are not consistent across articles, books, and software, so you end up doing a lot of translating.


Standard deviation and standard error are statistical concepts you probably learned well enough in Intro Stats to pass the test. Conceptually, you understand them, yet the difference doesn’t make a whole lot of intuitive sense.

So in this article, let’s explore the difference between the two, using an example to make these concepts more intuitive. You’ll also see why sample size has such a big effect on standard error.
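To make the distinction concrete, here is a minimal Python sketch. It is a simulation of our own, not the article's example. It assumes a hypothetical normally distributed population with mean 100 and SD 15, draws samples of increasing size, and reports the sample standard deviation alongside the standard error of the mean, computed as SD/√n. It also estimates the standard error directly, as the standard deviation of many sample means, to show what that formula is estimating. The helper names `sd_and_se` and `empirical_se` are hypothetical.

```python
import math
import random
import statistics

def sd_and_se(sample):
    """Return (sample standard deviation, standard error of the mean)."""
    sd = statistics.stdev(sample)     # spread of individual observations (n - 1 denominator)
    se = sd / math.sqrt(len(sample))  # estimated spread of the sample mean itself
    return sd, se

def empirical_se(n, reps=500, mu=100, sigma=15):
    """Standard deviation of `reps` sample means, each from a sample of size n."""
    means = [statistics.fmean(random.gauss(mu, sigma) for _ in range(n))
             for _ in range(reps)]
    return statistics.stdev(means)

random.seed(1)  # reproducible draws
# Hypothetical population: normal with mean 100 and SD 15 (an assumption for illustration)
for n in (10, 100, 1000):
    sample = [random.gauss(100, 15) for _ in range(n)]
    sd, se = sd_and_se(sample)
    print(f"n={n:>5}  SD={sd:6.2f}  SE={se:5.2f}  simulated SE={empirical_se(n):5.2f}")
```

The SD column should hover near 15 at every sample size, because it describes individual scores. Both SE columns should shrink by roughly a factor of √10 ≈ 3.16 each time n grows tenfold, because larger samples pin down the mean more precisely.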

When you hear about multilevel models or mixed models, you probably think of a nested design: Level 1 units nested in Level 2 units, which are in turn possibly nested in Level 3 units. But the variables that define these units, and that become random factors in the model, can in fact be crossed with each other rather than nested.

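One way to make "crossed" versus "nested" concrete is to check a data set directly. The Python sketch below uses the usual definitions: factor B is nested in factor A when each level of B appears within only one level of A, and the two are crossed when every level of B appears with every level of A. The function name `classify` and the student/school/item data are hypothetical, made up for illustration.

```python
from collections import defaultdict

def classify(records, inner, outer):
    """Describe factor `inner` relative to factor `outer`.

    records: list of dicts, one per observation.
    Returns 'nested', 'crossed', or 'partially crossed'.
    """
    outers_per_inner = defaultdict(set)  # inner level -> set of outer levels it appears with
    pairs = set()                        # observed (inner, outer) combinations
    for r in records:
        outers_per_inner[r[inner]].add(r[outer])
        pairs.add((r[inner], r[outer]))
    outer_levels = {r[outer] for r in records}
    # Nested: every inner level shows up under exactly one outer level
    if all(len(s) == 1 for s in outers_per_inner.values()):
        return "nested"
    # Crossed: every possible (inner, outer) combination is observed
    if len(pairs) == len(outers_per_inner) * len(outer_levels):
        return "crossed"
    return "partially crossed"

# Hypothetical data: each student attends one school, and every student answers every item
records = [
    {"student": s, "school": school, "item": item}
    for s, school in [("s1", "A"), ("s2", "A"), ("s3", "B"), ("s4", "B")]
    for item in ("q1", "q2", "q3")
]
print(classify(records, "student", "school"))  # nested
print(classify(records, "student", "item"))    # crossed
```

One caveat: the check depends on how levels are labeled. If student IDs restart within each school (a "student 1" in both School A and School B), the function sees one student spread over two schools, so each student needs a unique ID for the nesting to show up.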


Effect size statistics are all the rage these days.

Journal editors are demanding them. Committees won’t pass dissertations without them.

But the reason to compute them isn’t just that someone demands them. They can genuinely help you understand the results of your data analysis.

What Is an Effect Size Statistic?

The statistics usually labeled as effect sizes definitely qualify. But the concept of an effect size statistic is actually much broader. Here’s a description from a helpful article on effect size statistics:

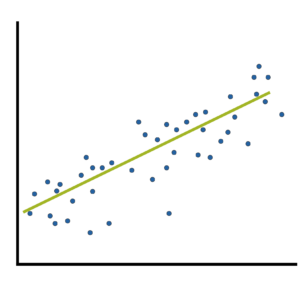

If you think about it, many familiar statistics fit this description. Regression coefficients describe the magnitude and direction of the relationship between two variables. So do correlation coefficients.
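As a small illustration, the Python sketch below computes both statistics for a tiny made-up data set. The regression slope is in the original units (change in y per one-unit change in x), while the correlation r is unitless, and the sign of each gives the direction. Multiplying the slope by SD(x)/SD(y) gives r, which is one way to see that the correlation is the slope on a standardized scale. The function names and the "hours studied / quiz score" labels are hypothetical, not from any particular library or study.

```python
import math
import statistics

def _centered_sums(x, y):
    """Return (Sxy, Sxx, Syy): sums of cross-products and squares about the means."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy, sxx, syy

def ols_slope(x, y):
    """Least-squares slope of y on x: units of y per one unit of x."""
    sxy, sxx, _ = _centered_sums(x, y)
    return sxy / sxx

def pearson_r(x, y):
    """Pearson correlation: unitless, between -1 and 1."""
    sxy, sxx, syy = _centered_sums(x, y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical data: hours studied (x) and quiz score (y)
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]

b = ols_slope(x, y)
r = pearson_r(x, y)
print(f"slope = {b:.3f}, r = {r:.3f}")  # slope = 0.600, r = 0.775
# Rescaling the slope by SD(x)/SD(y) recovers r
print(f"slope * SD(x)/SD(y) = {b * statistics.stdev(x) / statistics.stdev(y):.3f}")
```

Both numbers are positive, so both say y tends to rise with x; the slope says by how much in quiz points, while r says how strong the linear relationship is on a common scale.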