Mixed models are hard.

They’re abstract, they’re a little weird, and there is not a common vocabulary or notation for them.

But they’re also extremely important to understand because many data sets require their use.

Repeated measures ANOVA has too many limitations. It just doesn’t cut it any more.

One of the most difficult parts of fitting mixed models is figuring out which random effects to include in a model. And that’s hard to do if you don’t really understand what a random effect is or how it differs from a fixed effect.

I have found one issue particularly pervasive in making this even more confusing than it has to be. People in the know use the terms “random effects” and “random factors” interchangeably.

But they’re different.

This difference is probably not something you’ve thought about. But it’s impossible to really understand random effects if you can’t separate out these two concepts.

Here’s the basic idea:

· A factor is a variable.

· An effect is the variable’s coefficient.

Let’s unpack that so it’s meaningful.

When we’re talking about fixed factors and their effects, this doesn’t usually come up. We’re able to see easily the difference between the variables themselves and those variables’ effects.

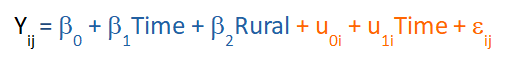

Here’s an example of a Linear Mixed Model that is predicting an outcome Y (Number of Jobs, in Thousands) over Time (5 decades, coded 0 to 4) for a set of counties. Each county is either Rural or Non-rural and is measured across the 5 decades.

To make it easy to see, the fixed part of the model is in blue and the random part of the model is in orange.
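Since the equation appears only in the image, here is a sketch of how a model like this is usually written out. The exact layout in the figure may differ. The index t for decade, the symbol ε for the residual, and the pairing of β1 with Time and β2 with Rural are notational assumptions based on the description in the text.

```latex
Y_{ti} =
  \underbrace{\beta_0 + \beta_1\,\mathrm{Time}_{ti} + \beta_2\,\mathrm{Rural}_{i}}_{\text{fixed part}}
  \;+\;
  \underbrace{u_{0i} + u_{1i}\,\mathrm{Time}_{ti} + \varepsilon_{ti}}_{\text{random part}}
```

Here i indexes the county and t indexes the decade within a county.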

It’s very clear that Time and Rural are both fixed predictor variables in this model and that β1 and β2 are their coefficients.

Just like in any regression model, those coefficients are called slopes and that is how we measure the effect of each predictor. We have one additional fixed effect in the model, the intercept β0. The intercept simply reports the mean of Y when all predictors are 0.

So just to be clear, in the fixed part of the model, we have:

· three fixed effects: β0, β1, β2

· two fixed variables: Time and Rural

One of these variables, Rural, is a factor because it’s categorical. The other, Time, is a covariate because it’s numerical. (Some people use the term covariate to mean a control variable, not a numerical predictor. That’s not how I’m using it here.)

This part is also simple because of the way we specify it in the software. Regardless of which software we use, all we have to do is specify which predictors we want in the fixed part of the model and the software will automatically estimate their coefficients.

If we also wanted to add, say, an interaction term between Rural and Time, we would just add it to the model, and the software would estimate a coefficient for that too.

But what about the random part of the model, in orange?

This part is a little harder, partly because of the notation, partly because of the way we specify it in the software, and partly because of the wording we use.

In the random part of the model, there is one random factor, two random effects, and the residual.

I suspect you’re familiar with residuals from linear models. Let’s focus instead on the two random terms.

Just like each fixed term in the model, each random term is made up of a random factor and a random effect. The random effects aren’t hard to see: those are u0, the random intercept, and u1, the random slope over Time.

There is also a random factor here: County. It doesn’t look like it’s here, but it is.

We use the term “random factor” and not “random variable” because random variables in a mixed model MUST be categorical. They are never covariates.

County is denoted in the model by the subscript i. You’ll notice that all the random terms in the model have an i subscript but none of the fixed terms do.

That’s because the fixed terms average over all the counties, but the random terms are per county.
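To make the "average over counties" versus "per county" distinction concrete, here is a minimal simulation sketch using only the Python standard library. All numeric values, the three county labels, and the helper names `county_line` and `simulate` are hypothetical and chosen only for illustration.

```python
import random

random.seed(0)

# Fixed effects (hypothetical values): shared by every county.
beta0, beta1, beta2 = 50.0, 4.0, -10.0   # intercept, Time slope, Rural shift

# The random factor: County. Each county maps to its Rural indicator.
counties = {"A": 1, "B": 0, "C": 1}
times = range(5)                          # 5 decades, coded 0 to 4

# The random effects: one (u0, u1) pair PER county.
random_effects = {
    c: (random.gauss(0, 5.0), random.gauss(0, 1.5)) for c in counties
}

def county_line(county):
    """Return the county-specific intercept and Time slope."""
    u0, u1 = random_effects[county]
    intercept = beta0 + beta2 * counties[county] + u0
    slope = beta1 + u1
    return intercept, slope

def simulate(county, resid_sd=1.0):
    """Simulate Y for one county across all decades, adding a residual."""
    intercept, slope = county_line(county)
    return [intercept + slope * t + random.gauss(0, resid_sd) for t in times]

data = {c: simulate(c) for c in counties}

for c in counties:
    intercept, slope = county_line(c)
    print(f"county {c}: intercept={intercept:.2f}, slope={slope:.2f}")
```

The betas appear once, while `random_effects` holds a separate pair of values for every level of County. That is the sense in which County is the factor and u0 and u1 are the effects.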

We could rewrite the random terms like this: u0County and u1Time*County.

That random intercept term, u0i, has both an intercept coefficient and a factor: County.

In statistical software, you have to specify both, but it doesn’t look like it. You’ll specify that you want a random intercept, but County is specified as the “subject.”

Likewise, u1iTime is a slope coefficient across Time for county i. Time itself is NOT a random factor. County is.

So again, when you specify it in the software, County is specified as the subject and Time is the only “variable” you’re putting in as a random effect.

It makes it look like Time is a random factor, but it’s not. You’re fitting a slope across Time for each county. This is equivalent to fitting a Time*County interaction and u1 is the interaction effect.
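As one illustration of what this looks like in software, here is a sketch using Python's statsmodels. The article does not name a package, so this choice is an assumption. The data are simulated, and the column names `jobs`, `time`, `rural`, and `county` are hypothetical. In statsmodels the "subject" is passed as `groups`, and the random slope is requested with `re_formula`.

```python
import numpy as np
import pandas as pd
import statsmodels.formula.api as smf

rng = np.random.default_rng(42)
n_counties, n_times = 40, 5

# Simulate data from a random-intercept, random-slope model (made-up values).
rows = []
for county in range(n_counties):
    rural = county % 2
    u0 = rng.normal(0, 5.0)   # this county's intercept deviation
    u1 = rng.normal(0, 1.5)   # this county's Time-slope deviation
    for time in range(n_times):
        jobs = 50 + 4 * time - 10 * rural + u0 + u1 * time + rng.normal(0, 1.0)
        rows.append({"county": county, "time": time, "rural": rural, "jobs": jobs})
df = pd.DataFrame(rows)

# Fixed part: the formula. Random factor: groups. Random effects: re_formula
# ("~time" requests a random intercept plus a random slope over time).
model = smf.mixedlm("jobs ~ time + rural", data=df,
                    groups=df["county"], re_formula="~time")
result = model.fit()

print(result.fe_params)                      # estimates of the three fixed effects
first_county = next(iter(result.random_effects))
print(result.random_effects[first_county])   # predicted u0 and u1 for one county
```

Note that `time` shows up in `re_formula`, which makes it look like a random factor, but the only random factor in the call is `county`. The fitted `random_effects` hold one intercept deviation and one slope deviation per county.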

So again, to summarize, in the random part of the model, we have:

· Two random effects: u0 and u1 for Time

· And one random variable: County

Calling County or Time a random effect is not just technically incorrect; it also makes it much harder to conceptualize what each of the real random effects is actually measuring.


Thank you, it’s very useful.

Many thanks for this clarification.

Your post is very useful for me. Thank you very much!

This is still confusing. So you are saying that what you have called the coefficients are the parameters of the model? Is this an accepted definition now?

Another issue that has confused me is the term “VARIATE”. I recall reading, many years ago, one of R. A. Fisher’s papers in which he used the term VARIATE to mean the actual values that a particular random variable takes on, not the variable itself. Quite a few textbooks written around the 60’s used this convention, and then for some reason it changed. When I asked in more modern times what a variate is, all I got was hand-waving answers. It seems that the term Variate is really just another term for a random variable.

Note that if we have a statistical experiment with two variables, the dependent variable Y must truly be a random variable (Variate), but the predictor variable may be fixed. This means we can actually control the predictor variable: if we repeat the experiment, we can set its values. This is done in many experiments in the physical sciences, where you can control, for example, the voltage across a resistor to a high degree of precision, but the current measured is random.

This example could be called a UNIVARIATE experiment. So what happens when the dependent variable and the predictor variable are both random? Then I guess we have the situation that is called BIVARIATE.

Now I notice you have used the term “COVARIATES”; here again is a confusing term. At the moment I am trying to read the book “Generalized Linear Models”, second edition, by P. McCullagh and J. A. Nelder. By the way, the term Generalized Linear Models (GLM) has the same acronym as the General Linear Model, bringing in another level of confusion for poor students. Back to the term COVARIATE: on page 23, the book defines covariates as the predictor variables, encompassing both numeric and categorical variables.

What do we even mean by linear? Well, even this is confusing for a newbie: it means linear in the parameters, not linear in the predictor variables.

I have been reading a 1922 paper by Fisher whose main purpose was to bring clearly defined terminology into this discipline of statistical science. Well, 100 years later it is still a mess.

By the way, this is not a criticism of this article. It is great that this is being aired, but how I wish we had really clearly defined terminology like in Pure Mathematics.

Perhaps that is asking too much.

Hi Alan,

Yes, I agree the vocabulary in statistics is a real problem. I’ve written quite a bit on this, including some of the terms you mentioned, like GLM and covariate. See:

https://www.theanalysisfactor.com/statistics-terminology-especially-confusing/

https://www.theanalysisfactor.com/series-on-confusing-statistical-terms/

I’ve actually never heard the term VARIATE used by itself, so perhaps that is simply not used any more.

And yes, you’re spot on that only Y, the response variable, is assumed to be a random variable. Xs are fixed (unless you’ve got a mixed model). The exception is called Major Axis regression or Model II or Type II regression. (This is not, of course, to be confused with Type II errors or Type II sums of squares, both of which are part of regular regression). 🙂

https://www.mbari.org/introduction-to-model-i-and-model-ii-linear-regressions/

Yes, it’s a real problem.

The posts on this site are very very useful for me. Thank you.

One part confuses me, though. From the two passages below, I couldn’t tell whether County is a random variable or a random factor.

1. “There is also a random factor here: County. It doesn’t look like it’s here, but it is. We use the term “random factor” and not “random variable” because random variables in a mixed model MUST be categorical. They are never covariates.”

2. ” So again, to summarize, in the random part of the model, we have:

· Two random effects: μ0 and μ1 for Time

· And one random variable: County”

What is County, a random factor or a random variable?

A factor is a variable. So County is both a random variable and a factor, because it’s a categorical variable.

Thanks for your comment. ^^