Much like the General Linear Model and the Generalized Linear Model in #7, there are many examples in statistics of terms with (ridiculously) similar names but nuanced meanings.

Today I talk about the difference between multivariate and multiple, as they relate to regression.

Multiple Regression

A regression analysis with one dependent variable and eight independent variables is NOT a multivariate regression model. It’s a multiple regression model.

And believe it or not, it’s considered a univariate model.

This is especially important to remember if you’re an SPSS user. For this model, choose Univariate GLM (General Linear Model), not Multivariate.

I know this sounds crazy and misleading. Why would a model that contains nine variables (eight Xs and one Y) be considered a univariate model?

It’s because of the fundamental idea in regression that Xs and Ys aren’t the same. We’re using the Xs to understand the mean and variance of Y. This is why the residuals in a linear regression are differences between predicted and actual values of Y. Not X.

(And of course, there is an exception, called Type II or Major Axis linear regression, where X and Y are not distinct. But in most regression models, Y has a different role than X.)
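To make the “one Y” point concrete, here is a minimal sketch of a multiple regression in Python with NumPy. It is fit by ordinary least squares on simulated data, and the data, seed, and coefficient values are all made up for illustration. Eight Xs go in, but the residuals come out as a single column in the units of Y.

```python
import numpy as np

# Hypothetical simulated data: 100 observations, eight predictors, one outcome.
rng = np.random.default_rng(0)
n, k = 100, 8
X = rng.normal(size=(n, k))                     # eight Xs
true_beta = np.arange(1, k + 1, dtype=float)    # made-up slopes
y = 2.0 + X @ true_beta + rng.normal(size=n)    # one Y

# Add an intercept column and fit ordinary least squares.
X_design = np.column_stack([np.ones(n), X])
beta_hat, *_ = np.linalg.lstsq(X_design, y, rcond=None)

# Residuals are observed Y minus predicted Y: one value per observation,
# measured in the units of Y. Nothing is "left over" in the Xs.
y_hat = X_design @ beta_hat
residuals = y - y_hat

print(beta_hat.shape)   # (9,)   intercept + eight slopes
print(residuals.shape)  # (100,) a single column, because there is one Y
```

However many Xs you add, `residuals` stays one-dimensional. That single column is what makes this a univariate model.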

It’s the number of Ys that tells you whether it’s a univariate or multivariate model. That said, outside of SPSS, I haven’t seen anyone use the term univariate for this model in practice. Instead, the assumed default is that regression models have one Y, so the focus shifts to how many Xs the model has. This leads us to…

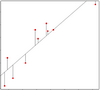

Simple Regression: A regression model with one Y (dependent variable) and one X (independent variable).

Multiple Regression: A regression model with one Y (dependent variable) and more than one X (independent variables).

References below.
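The naming rule can be written as a tiny function. `describe_regression` is a hypothetical helper, not part of any library. The count of Ys picks univariate vs. multivariate, and the count of Xs picks simple vs. multiple.

```python
def describe_regression(n_y: int, n_x: int) -> tuple[str, str]:
    """Label a regression model by counting its Ys and Xs (hypothetical helper).

    The number of Ys decides univariate vs. multivariate;
    the number of Xs decides simple vs. multiple.
    """
    if n_y < 1 or n_x < 1:
        raise ValueError("a regression model needs at least one Y and one X")
    y_label = "univariate" if n_y == 1 else "multivariate"
    x_label = "simple" if n_x == 1 else "multiple"
    return y_label, x_label


print(describe_regression(1, 1))  # ('univariate', 'simple')
print(describe_regression(1, 8))  # ('univariate', 'multiple')
print(describe_regression(3, 8))  # ('multivariate', 'multiple')
```

In everyday usage the “univariate” label is usually dropped. That is why the second case is normally just called multiple regression.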

Multivariate Regression

Multivariate analysis ALWAYS describes a situation with multiple dependent variables.

So a multivariate regression model is one with multiple Y variables. It may have one X variable or more than one. It is closely related to MANOVA (Multivariate Analysis of Variance): both are forms of the multivariate General Linear Model.

Other examples of Multivariate Analysis include:

- Principal Component Analysis

- Factor Analysis

- Canonical Correlation Analysis

- Linear Discriminant Analysis

- Cluster Analysis

But wait. Multivariate analyses like cluster analysis and factor analysis have no dependent variable, per se. Why is it about dependent variables?

Well, it’s not really about dependency. It’s about which variables’ means and variances are being analyzed. In a multivariate regression, we have multiple dependent variables, whose joint mean is being predicted by the one or more Xs. It’s the variance and covariance in the set of Ys that we’re modeling (and estimating in the variance-covariance matrix).
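Here is a minimal NumPy sketch of a multivariate (multiple) regression on simulated data. It assumes three correlated outcomes and four predictors, and all values are hypothetical. The coefficients now form a matrix with one column per Y. The residuals form a matrix too, and from them we estimate a 3 x 3 variance-covariance matrix of the Ys.

```python
import numpy as np

# Hypothetical data: 200 observations, four predictors, three correlated outcomes.
rng = np.random.default_rng(1)
n, k, m = 200, 4, 3
X = np.column_stack([np.ones(n), rng.normal(size=(n, k))])   # intercept + 4 Xs
B_true = rng.normal(size=(k + 1, m))                          # one coefficient column per Y
Sigma_true = np.array([[1.0, 0.6, 0.3],
                       [0.6, 1.0, 0.5],
                       [0.3, 0.5, 1.0]])                      # made-up error covariance
errors = rng.multivariate_normal(np.zeros(m), Sigma_true, size=n)
Y = X @ B_true + errors                                       # n x m: several Ys

# Least-squares fit of all m responses at once.
B_hat, *_ = np.linalg.lstsq(X, Y, rcond=None)

# Residuals are now an n x m matrix; their cross-products give the
# estimated variance-covariance matrix of the Ys (divisor n - number of columns of X).
E = Y - X @ B_hat
Sigma_hat = E.T @ E / (n - X.shape[1])

print(B_hat.shape)           # (5, 3)
print(np.round(Sigma_hat, 2))
```

The off-diagonal entries of `Sigma_hat` are the covariances among the Ys. A univariate model has no place to put them.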

Note: this is actually a situation where the subtle differences in what we call that Y variable can help. Calling it the outcome or response variable, rather than dependent, is more applicable to something like factor analysis.

So when to choose multivariate GLM? When you’re jointly modeling the variation in multiple response variables.
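One way to see what “jointly” adds: with ordinary least squares, the multivariate coefficient estimates match running a separate multiple regression for each Y. What separate fits don’t give you is the covariance between the Ys’ errors, which multivariate tests such as Wilks’ lambda use. Here is a sketch on simulated, hypothetical data.

```python
import numpy as np

# Hypothetical data: two predictors (plus intercept) and two correlated outcomes.
rng = np.random.default_rng(2)
n = 150
X = np.column_stack([np.ones(n), rng.normal(size=(n, 2))])
errors = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.8], [0.8, 1.0]], size=n)
B_true = np.array([[1.0, -1.0],
                   [0.5, 0.0],
                   [0.0, 2.0]])
Y = X @ B_true + errors

# Joint (multivariate) least-squares fit of both Ys.
B_joint, *_ = np.linalg.lstsq(X, Y, rcond=None)

# Two separate multiple regressions, one per Y.
B_separate = np.column_stack(
    [np.linalg.lstsq(X, Y[:, j], rcond=None)[0] for j in range(Y.shape[1])]
)

# The point estimates coincide...
print(np.allclose(B_joint, B_separate))  # True

# ...what the joint model adds is the covariance between the Ys' errors,
# which separate fits ignore and multivariate tests rely on.
E = Y - X @ B_joint
residual_cov = E.T @ E / (n - X.shape[1])
print(residual_cov[0, 1])
```

So the choice of multivariate GLM matters mostly for inference about the Ys as a set, not for the individual coefficient estimates.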

References

In response to many requests in the comments, I suggest the following references. I give the caveat, though, that neither reference compares the two terms directly. They simply define each one. So rather than just list references, I’m going to explain them a little.

1. Neter, Kutner, Nachtsheim & Wasserman’s Applied Linear Regression Models, 3rd ed. There are, incidentally, newer editions with slight changes in authorship, but I’m citing the one on my shelf.

Chapter 1, Linear Regression with One Independent Variable, includes:

“Regression model 1.1 … is ‘simple’ in that there is only one predictor variable.”

Chapter 6 is titled Multiple Regression – I, and section 6.1 is “Multiple Regression Models: Need for Several Predictor Variables.” Interestingly enough, there is no directly quotable definition of the term “multiple regression.” Even so, the meaning is clear; read the chapter and you’ll see.

There is no mention of the term “Multivariate Regression” in this book.

2. Johnson & Wichern’s Applied Multivariate Statistical Analysis, 3rd ed.

Chapter 7, Multivariate Linear Regression Models, section 7.1 Introduction. Here it says:

“In this chapter we first discuss the multiple regression model for the prediction of a single response. This model is then generalized to handle the prediction of several dependent variables.” (Emphasis theirs).

They finally get to Multivariate Multiple Regression in Section 7.7. Here they “consider the problem of modeling the relationship between m responses, Y1, Y2, …, Ym, and a single set of predictor variables.”

Misuses of the Terms

I’d be shocked, however, if there weren’t some books or articles out there that use or define these terms differently from the way I’ve described them here, following these references. It’s very easy to confuse these terms, even for those of us who should know better.

And honestly, it’s not that hard to just describe the model instead of naming it. “Regression model with four predictors and one outcome” doesn’t take a lot more words and is much less confusing.

If you’re ever confused about the type of model someone is describing to you, just ask.


First Published 4/29/09;

Updated 2/23/21 to give more detail.